Run Data Feed Forward over Unified Servers#

About this tutorial#

Data Feed Forward (DFF) allows test data collected at different test stages to be transmitted to ACS Unified Servers (AUS) in other regions or at OSATs. With DFF, you can design a test process in which one AUS serves the wafer sort test insertion. After the wafer sort test program runs, its test data is transferred to another AUS, which serves the final test insertion. During final test, the program references the earlier wafer sort data to improve test accuracy, which in turn improves production efficiency and product quality.

Purpose#

In this tutorial, you will learn how to:

Enable DFF write on the first Host Controller and run the SMT8 test program, which uploads demo DFF data to the first AUS through TPI.

Let the DFF cloud transfer the uploaded data to the second AUS according to the predefined data transfer rules.

Run the SMT8 test program on the second Host Controller while the DFF read application is active on the second AUS. The application reads the DFF data from the second AUS and returns it to the second Host Controller.

Print the DFF data to the EWC console from the SMT8 test program on the second Host Controller.

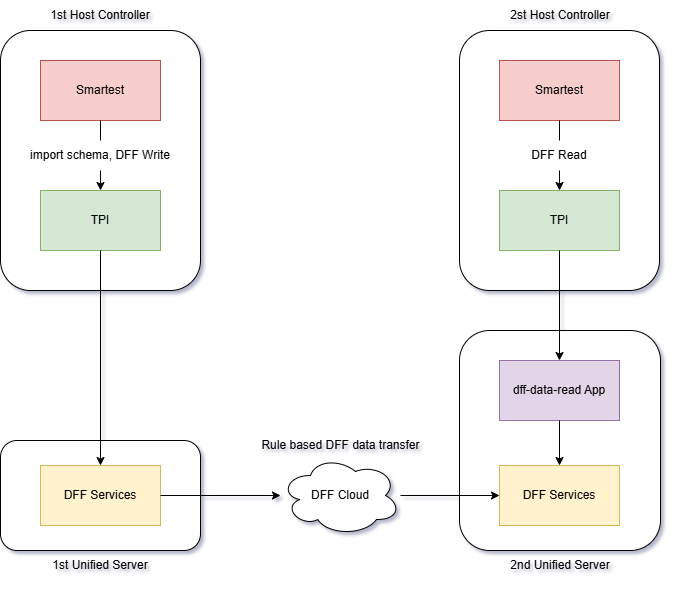

The diagram below illustrates DFF data stream between VMs:

Compatibility and Prerequisites#

System Compatibility#

SmarTest 8 / RHEL74 / Nexus 3.0.0 / Edge 3.3.0-prod / Unified Server 2.2.0

Prerequisites#

Two Container Hub (https://hub.advantest.com/) accounts are required:

Two organization (org) accounts configured with the App Descriptor project: one org administrator (admin) account and one standard user account.

A project in the ACS Container Hub is also required.

A DFF UI (https://dff.unified.advantest.com/) account is required.

Environment Preparation#

Note: The ‘acsqa-dpat-gemini’ project used below is for demonstration purposes only. Replace it with your own project.

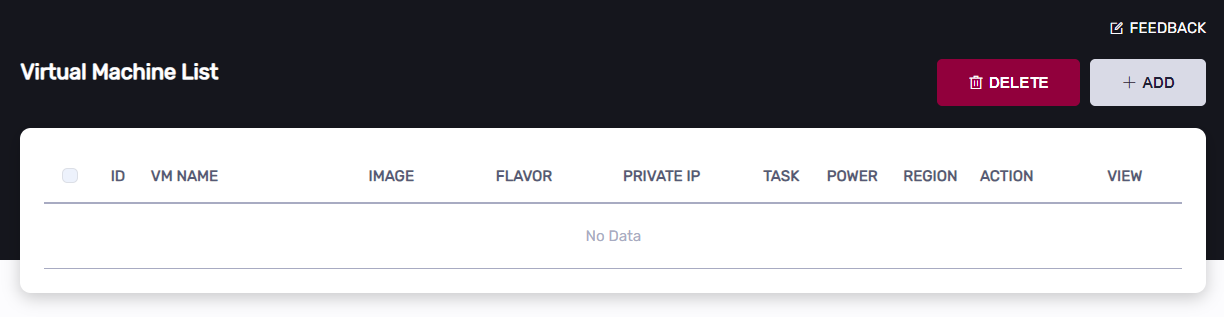

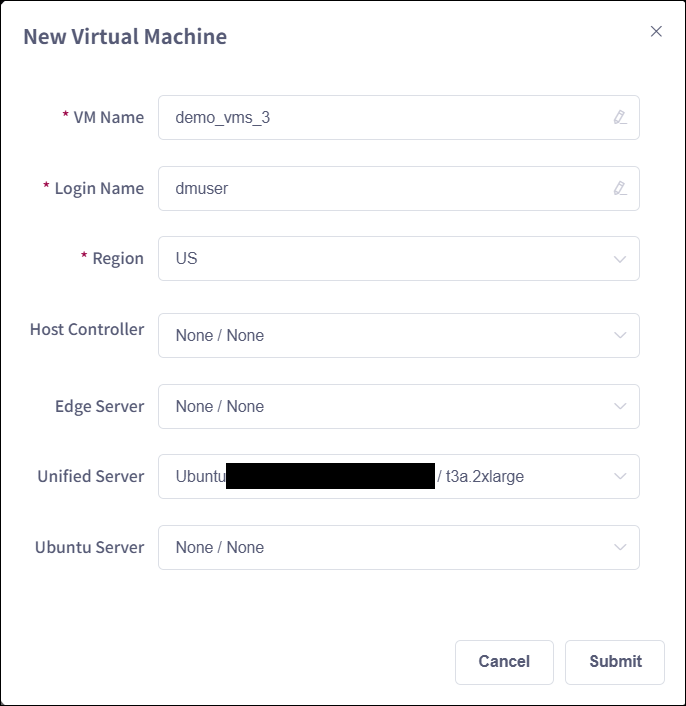

Create Essential VMs for RTDI#

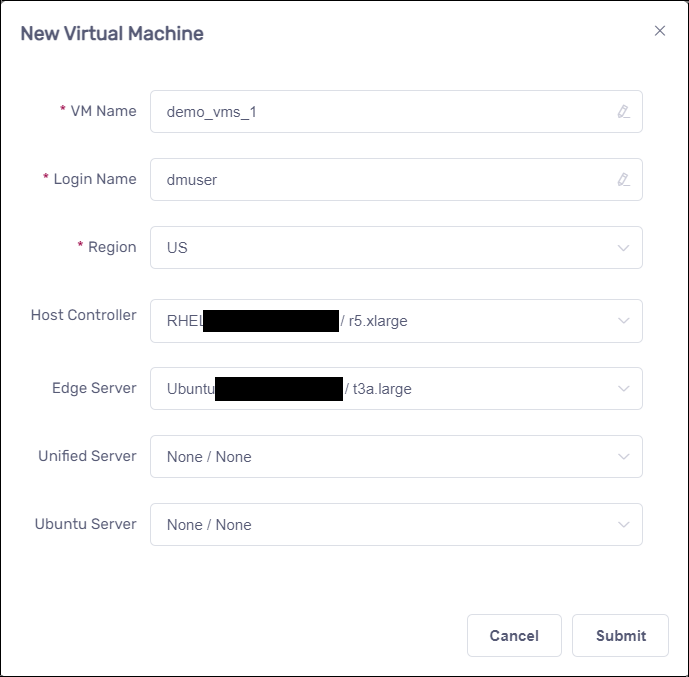

Click the “Add” button to add VMs:

Create 2 sets of Host Controllers and Edge Servers#

Create 2 Unified Servers, each working with a separate Host Controller#

Configure the 1st Unified Server as the DFF data transfer agent#

Configure the Unified Server as follows:

Configure the 2nd Unified Server as the DFF data consumer server#

Configure the Unified Server as follows:

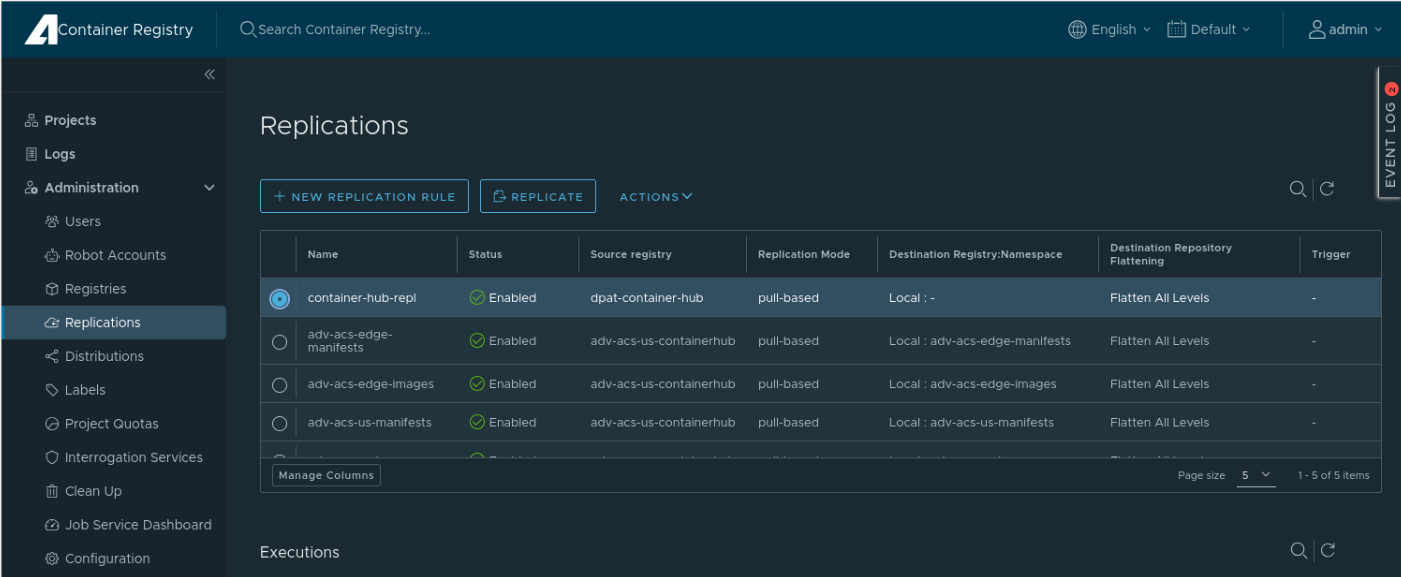

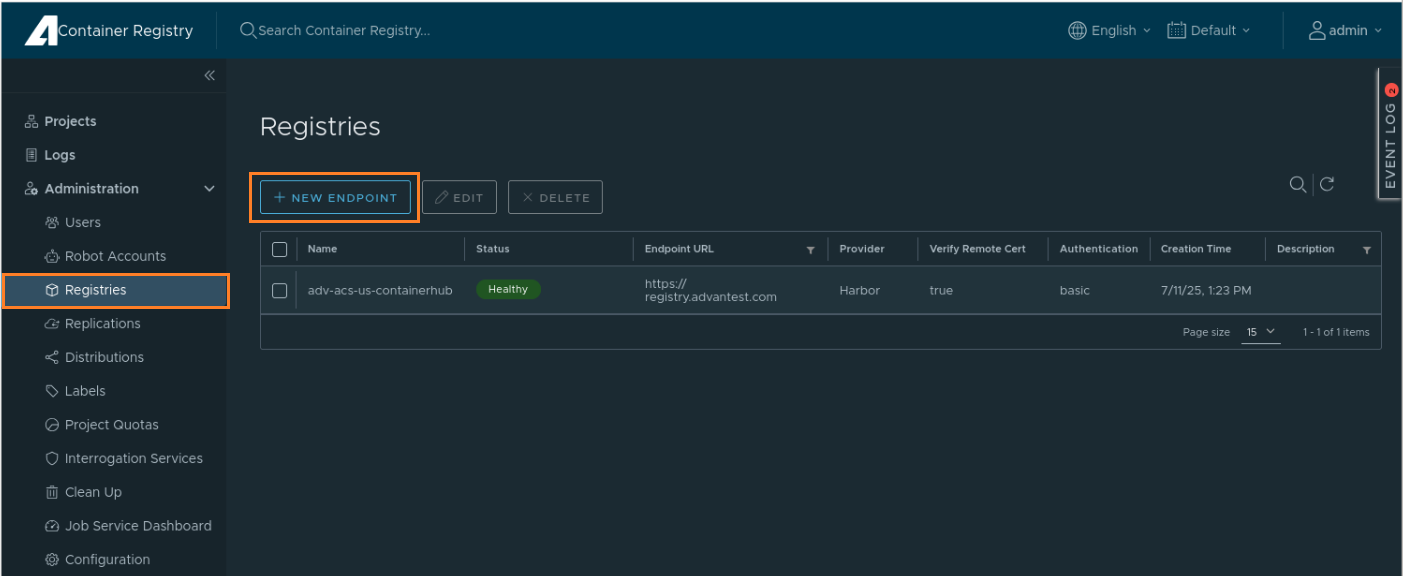

Replicate projects from Container Hub to each Unified Server on Harbor

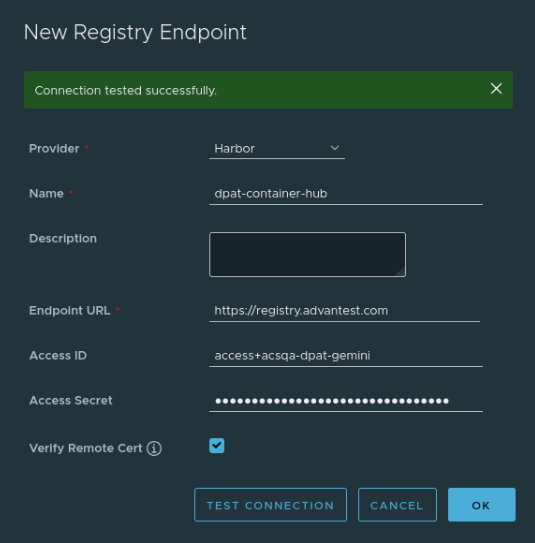

Create new endpoint

Click “NEW ENDPOINT” on the “Registries” page.

In the “New Registry Endpoint” dialog that opens, enter the following:

Name: “dpat-container-hub”

Endpoint URL: “https://registry.advantest.com”

Access ID: org user account

Access Secret: the account’s docker secret

Then click “TEST CONNECTION”. Once “Connection Tested successfully” appears at the top of the dialog, click “OK” to submit the new endpoint to Harbor.

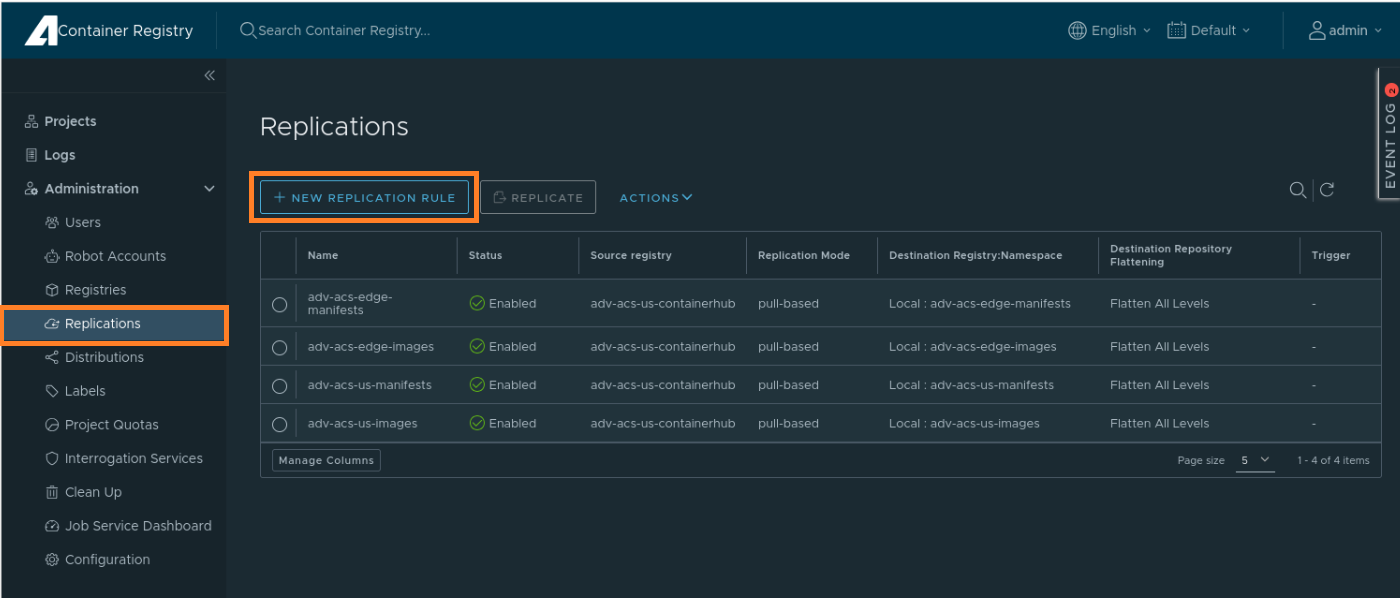

Create new replication rule

Click “NEW REPLICATION RULE” on the “Replications” page.

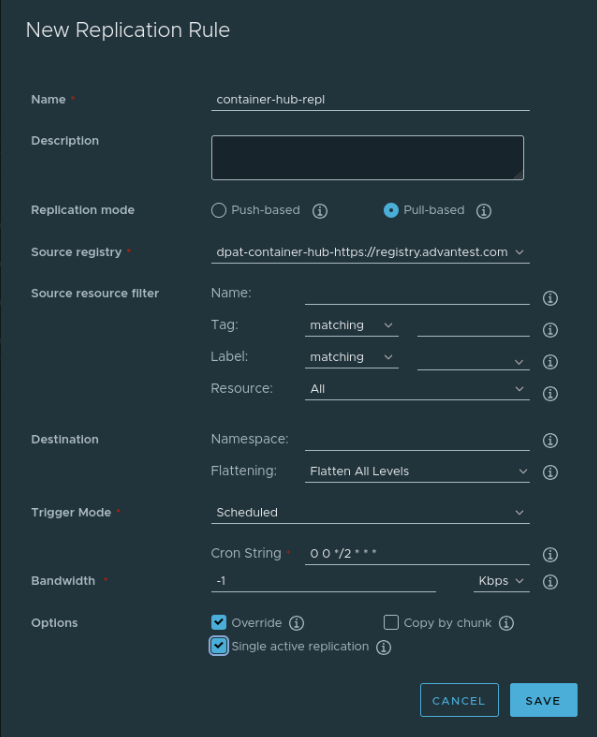

In the “New Replication Rule” dialog that opens, enter or select the following:

Name: input “container-hub-repl”

Replication Mode: click “Pull-based”

Source registry: select “dpat-container-hub”

Flattening: select “Flatten All Levels”

Trigger Mode: select “Scheduled”

Cron String: input “0 0 */2 * * *” (the replication runs automatically every 2 hours)

Then click “SAVE” to submit the new replication rule.
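The cron string above has six fields, with seconds in the first position. As a rough illustration of why “0 0 */2 * * *” fires every 2 hours, the sketch below expands the hour field. It is a minimal, hypothetical expander (the names `FIELD_NAMES`, `split_cron`, and `expand_field` are made up for this example) and is not Harbor's own parser.

```python
# Illustrative only: a tiny expander for simple cron fields, not Harbor's parser.
FIELD_NAMES = ["second", "minute", "hour", "day_of_month", "month", "day_of_week"]


def split_cron(cron: str) -> dict:
    """Map the six whitespace-separated fields to their names."""
    parts = cron.split()
    if len(parts) != len(FIELD_NAMES):
        raise ValueError(f"expected 6 fields, got {len(parts)}")
    return dict(zip(FIELD_NAMES, parts))


def expand_field(expr: str, low: int, high: int) -> list:
    """Expand '*', '*/n', or a single number into the values it matches."""
    if expr == "*":
        return list(range(low, high + 1))
    if expr.startswith("*/"):
        return list(range(low, high + 1, int(expr[2:])))
    return [int(expr)]


fields = split_cron("0 0 */2 * * *")
hours = expand_field(fields["hour"], 0, 23)
print(hours)  # the hours of the day at which the rule triggers
```

With second and minute both fixed at 0, the rule triggers once at the start of each listed hour.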

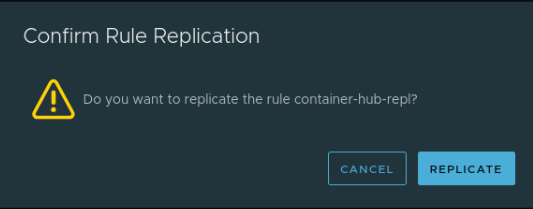

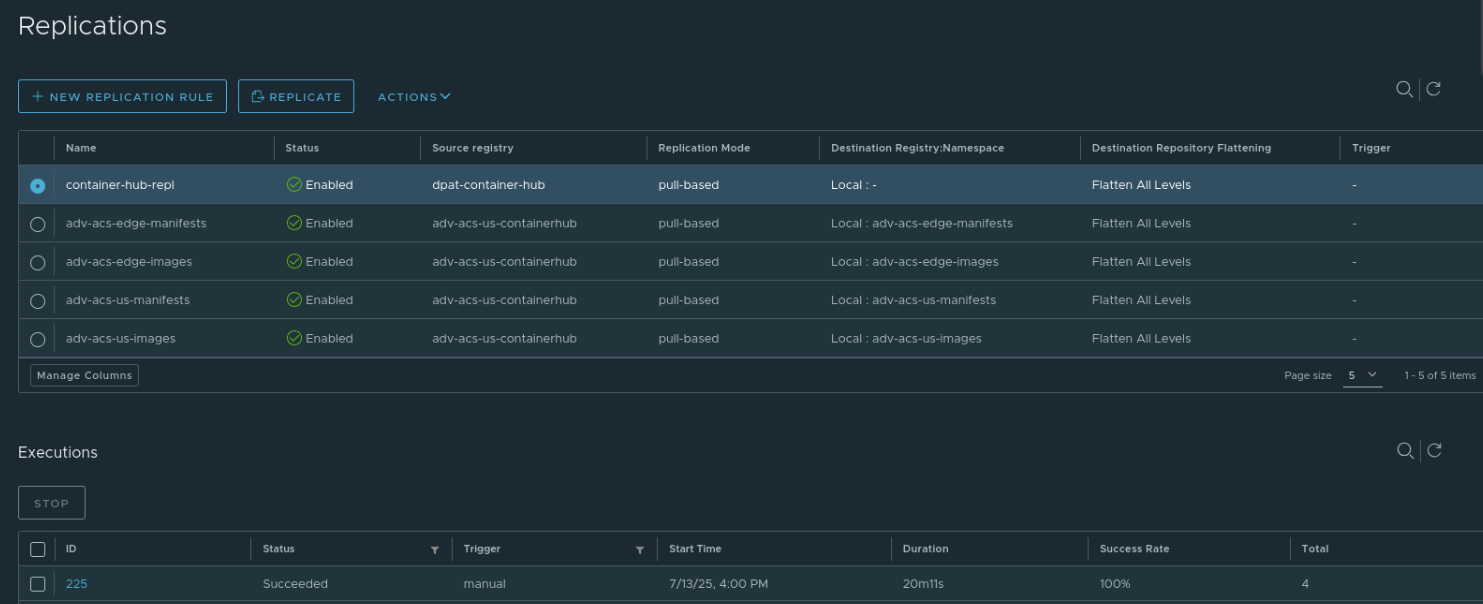

Replicate Container Hub manually

Wait about 20 minutes for the replication to complete.

You can confirm the result in the “Executions” section of the page: the “Status” should be Succeeded and the “Success Rate” should be 100%.

The Container Hub images are now replicated to the Unified Server.

Note: If you modify the app code and push a new Docker image to Container Hub, you need to trigger the replication again manually from the “Replications” page, or wait for the next automatic replication to finish.

Transfer demo program to each Host Controller#

Download the test program

Download the application-dpat-v3.1.0-RHEL74.tar.gz archive (a simple DPAT algorithm in Python) to your computer.

Transfer the file to the ~/apps directory on the Host Controller VM.

Refer to the “Transferring files” section of the VM Management page.

In the VNC GUI, extract the files in a bash console:

```
cd ~/apps/
tar -zxf application-dpat-v3.1.0-RHEL74.tar.gz
```

Create Docker image for dff-data-read app in the 2nd Host Controller#

Load the base image.

```
cd ~
wget -N http://10.44.5.139/docker/python39-basic20.tar.zip
unzip python39-basic20.tar.zip
sudo docker load -i python39-basic20.tar
```

Download the dff-data-read app code. This app is implemented on top of the DPAT app.

```
cd ~/apps/application-dpat-v3.1.0/
wget -N http://10.44.5.139/app/dff-data-read-v1.tar.gz
tar zxf dff-data-read-v1.tar.gz
```

Log in with the org user account so that you can push the Docker image:

```
sudo docker login registry.advantest.com --username ChangeToUserName --password ChangeToSecret
```

Navigate to the dff-data-read app directory (~/apps/application-dpat-v3.1.0/dff-data-read-v1), where you can find these files:

Dockerfile: used for building the dff-data-read app Docker image

```
FROM registry.advantest.com/adv-dpat/python39-basic:2.0

RUN yum install -y openssh-server
RUN mkdir /var/run/sshd
RUN echo 'root:root123' | chpasswd
RUN sed -i 's/PermitRootLogin prohibit-password/PermitRootLogin yes/' /etc/ssh/sshd_config
RUN sed 's@session\s*required\s*pam_loginuid.so@session optional pam_loginuid.so@g' -i /etc/pam.d/sshd
EXPOSE 22

# set nexus env variables
WORKDIR /
RUN python3.9 -m pip install pandas jsonschema

# copy run files and directories
RUN mkdir -p /dpat-app;chmod a+rwx /dpat-app
RUN mkdir -p /dpat-app/data;chmod a+rwx /dpat-app/data
RUN touch dpat-app/__init__.py
COPY workdir /dpat-app/workdir
COPY conf /dpat-app/conf
# RUN yum -y install libunwind-devel
RUN echo 'export $(cat /proc/1/environ |tr "\0" "\n" | xargs)' >> /etc/profile

ENV LOG_FILE_PATH "/tmp/app.log"
ENV DFF_FLAG="False"
WORKDIR /dpat-app/workdir
RUN /usr/bin/ssh-keygen -A
RUN echo "python3.9 -u /dpat-app/workdir/run_dpat.py&" >> /start.sh
RUN echo "/usr/sbin/sshd -D" >> /start.sh
CMD sh /start.sh
```

workdir/run_dpat.py: This is the main entry point for the dff-data-read app

```
"""
Copyright 2023 ADVANTEST CORPORATION. All rights reserved

Dynamic Part Average Testing
This module allows the user to calculate new limits based on dynamic part average testing.
It is assumed that this is being run on the docker container with the Nexus connection.
"""
import json

from AdvantestLogging import logger
from dpat import DPAT
from oneapi import OneAPI, send_command


def run(nexus_data, args, save_result_fn):
    """Callback function that will be called upon receiving data from OneAPI

    Args:
        nexus_data: Datalog coming from nexus
        args: Arguments set by user
    """
    base_limits = args["baseLimits"]
    dpat = args["DPAT"]  # persistent class
    # compute dpat to get new high/low limits
    dpat.compute_once(nexus_data, base_limits, logger, args)
    results_df, new_limits = dpat.datalog()
    # logger.info(new_limits)
    if args["VariableControl"] == True:
        # Send dpat computed data back to Host Controller Nexus, the Smartest will receive the data from Nexus
        send_command(new_limits, "VariableControl")
    save_result_fn(results_df)


def main():
    """Set logger and call OneAPI"""
    logger.info("Starting DPAT datacolection and computation")
    logger.info("Copyright 2022 - Advantest America Inc")
    with open("data/base_limits.json", encoding="utf-8") as json_file:
        base_limits = json.load(json_file)
    args = {
        "DPAT": DPAT(),
        "baseLimits": base_limits,
        "config_path": "../conf/test_suites.ini",
        "setPathStorage": "data",
        "setPrefixStorage": "Demo_Dpat_123456",
        "saveStat": True,
        "VariableControl": False,
        # Note: smt version is not visible from run_dpat, so it is set in sample.py in consumeLotStart
    }
    logger.info("Starting OneAPI")
    """
    Create a OneAPI instance to receive Smartest data from Host Controller Nexus,
    the callback function "run" will be called when data received with the parameter in the "args"
    """
    oneapi = OneAPI(callback_fn=run, callback_args=args)
    oneapi.start()


if __name__ == "__main__":
    main()
```
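The callback wiring in run_dpat.py can be hard to follow without the Nexus libraries at hand. The sketch below mimics the same pattern in plain Python: a monitor receives data, runs the user callback in a worker thread, and the callback hands its result back through `save_result_fn`. `FakeMonitor`, its `on_data` method, and the `scale` argument are hypothetical stand-ins for illustration and are not part of liboneAPI or the DPAT app.

```python
import threading


class FakeMonitor:
    """Hypothetical stand-in for the monitor that owns the callback."""

    def __init__(self, callback_fn, callback_args):
        self.callback_fn = callback_fn
        self.callback_args = callback_args
        self.results = None

    def save_result(self, results):
        # The callback calls this to hand its result back to the monitor.
        self.results = results

    def on_data(self, data):
        # Run the callback in a worker thread so data handling is not blocked.
        thread = threading.Thread(
            target=self.callback_fn,
            args=(data, self.callback_args, self.save_result),
        )
        thread.start()
        return thread


def run(nexus_data, args, save_result_fn):
    """Same signature as the tutorial's callback; the computation is a placeholder."""
    scaled = [value * args["scale"] for value in nexus_data]
    save_result_fn(scaled)


monitor = FakeMonitor(callback_fn=run, callback_args={"scale": 2})
monitor.on_data([1, 2, 3]).join()  # wait for the worker before reading results
print(monitor.results)
```

Joining the thread before reading `results` corresponds to the `join()` performed at lot end in the sample code.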

workdir/oneapi.py:

Using OneAPI, this code does the following:

Retrieves test data and DFF data read requests from the Host Controller, then passes the test data to the DPAT algorithm or reads DFF data from the AUS.

Receives the DPAT results from the algorithm, or the DFF data from the AUS, and sends them back to the Host Controller.

```
from liboneAPI import Interface
from liboneAPI import AppInfo
from sample import SampleMonitor
from sample import sendCommand
import signal
import sys
import pandas as pd
from AdvantestLogging import logger


def send_command(result_str, command):
    # name = command;
    # param = "DriverEvent LotStart"
    # sendCommand(name, param)
    name = command
    param = "DriverEvent LotStart"
    sendCommand(name, param)
    name = command
    param = "Config Enabled=1 Timeout=10"
    sendCommand(name, param)
    name = command
    param = "Set " + result_str
    sendCommand(name, param)
    logger.info(result_str)


class OneAPI:
    def __init__(self, callback_fn, callback_args):
        self.callback_args = callback_args
        self.get_suite_config(callback_args["config_path"])
        self.monitor = SampleMonitor(
            callback_fn=callback_fn, callback_args=self.callback_args
        )

    def get_suite_config(self, config_path):
        # conf/test_suites.ini
        test_suites = []
        with open(config_path) as f:
            for line in f:
                li = line.strip()
                if not li.startswith("#"):
                    test_suites.append(li.split(","))
        self.callback_args["test_suites"] = pd.DataFrame(
            test_suites, columns=["testNumber", "testName"]
        )

    def start(
        self,
    ):
        signal.signal(signal.SIGINT, quit)
        logger.info("Press 'Ctrl + C' to exit")
        me = AppInfo()
        me.name = "sample"
        me.vendor = "adv"
        me.version = "2.1.0"
        Interface.registerMonitor(self.monitor)
        NexusDataEnabled = True  # whether to enable Nexus Data Streaming and Control
        TPServiceEnabled = (
            True  # whether to enable TPService for communication with NexusTPI
        )
        res = Interface.connect(me, NexusDataEnabled, TPServiceEnabled)
        if res != 0:
            logger.info(f"Connect fail. code = {res}")
            sys.exit()
        logger.info("Connect succeed.")
        signal.signal(signal.SIGINT, quit)
        while True:
            signal.pause()

    def quit(
        self,
    ):
        res = Interface.disconnect()
        if res != 0:
            logger.info(f"Disconnect fail. code = {res}")
        sys.exit()
```
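To make the expected shape of conf/test_suites.ini concrete, here is a minimal standard-library sketch of the parsing that `get_suite_config` performs: one `testNumber,testName` pair per line, with `#` lines treated as comments. The function name `parse_test_suites` and the sample test names are made up for this example, and unlike the original it also skips blank lines, which is an assumption about how such a file should be handled.

```python
import io


def parse_test_suites(lines):
    """Return (testNumber, testName) pairs from comma-separated config lines."""
    suites = []
    for line in lines:
        entry = line.strip()
        # Skip comments; blank lines are skipped too (an addition in this sketch).
        if not entry or entry.startswith("#"):
            continue
        test_number, test_name = (part.strip() for part in entry.split(",", 1))
        suites.append((test_number, test_name))
    return suites


# Hypothetical file content, used only to exercise the parser.
sample_config = io.StringIO(
    "# testNumber,testName\n"
    "1001,vdd_leakage\n"
    "\n"
    "1002, idd_static\n"
)
suites = parse_test_suites(sample_config)
print(suites)
```

The resulting pairs correspond to the two columns, `testNumber` and `testName`, of the DataFrame built in oneapi.py.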

workdir/sample.py: In this file:

the “dff_query” method performs the “DFF read” from the Unified Server

the action “Get_DFF_Data” in the “consumeTPRequest” method identifies a DFF data read request from the 2nd Host Controller
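The request handling described above boils down to reading the `request` field of a JSON message and branching on its value. The sketch below shows that dispatch in isolation. `handle_request` and the `read_dff` parameter are hypothetical names for this example, and serializing the DFF data with `json.dumps` is an assumption made so the reply is always a string; the sample code returns the value from its DFF lookup directly.

```python
import json


def handle_request(request: str, read_dff) -> str:
    """Dispatch on the 'request' field of a JSON message from the test program."""
    action = json.loads(request).get("request")
    if action == "health":
        return "success"
    if action == "Get_DFF_Data":
        # read_dff is any callable returning the DFF data (hypothetical hook).
        return json.dumps(read_dff())
    return json.dumps({"response": "empty or unsupported action"})


# Example: a fake reader standing in for the query against the Unified Server.
reply = handle_request('{"request": "Get_DFF_Data"}', lambda: [1.5, 2.5])
print(reply)
```

Any other action falls through to the "empty or unsupported action" reply, matching the final branch of the sample code.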

```
from liboneAPI import Monitor
from liboneAPI import DataType
from liboneAPI import TestCell
from liboneAPI import Command
from liboneAPI import Interface
from liboneAPI import toHead
from liboneAPI import toSite
from liboneAPI import DFFData
from liboneAPI import QueryResponse
from AdvantestLogging import logger
import os
import sys
import pandas as pd
import json
import re
import threading
import time


def dff_query(condition: str) -> dict:
    dffData = {}
    query_res = QueryResponse()
    query_res = DFFData.createRawQueryRequest(condition)
    if query_res.code != DFFData.SUCCEED:
        return dffData  # error handling: response.errmsg
    jobID = query_res.result
    while True:
        query_res = DFFData.getQueryTaskStatus(jobID)
        if query_res.code != DFFData.SUCCEED:
            return dffData  # error handling: response.errmsg
        if query_res.result == "RUNNING":
            time.sleep(1)  # wait a while before polling again (interval chosen for illustration)
        if query_res.result == "COMPLETED":
            query_res = DFFData.getQueryResult(jobID, "json")
            if query_res.code != DFFData.SUCCEED:
                pass  # error handling: response.errmsg
            else:
                dffData = query_res.result
            break
    return dffData


def sendCommand(cmd_name, cmd_param):
    logger.info(f"Command -- send {cmd_name}")
    tc = TestCell()
    cmd = Command()
    cmd.param = cmd_param
    cmd.name = cmd_name
    cmd.reason = f"test {cmd.name} command"
    res = Interface.sendCommand(tc, cmd)
    if res != 0:
        logger.info(f"Send command fail. code = {res}")


class SampleMonitor(Monitor):
    def __init__(self, callback_fn, callback_args):
        Monitor.__init__(self)
        self.mTouchdownCnt = 0
        self.result_test_suite = []
        self.results_df = pd.DataFrame()
        self.callback_fn = callback_fn
        self.callback_args = callback_args
        self.callback_thread = None  # To keep track of the thread
        self.test_suites = callback_args["test_suites"]
        self.ecid = None
        self.time_nanosec = 0
        self.stime_nanosec = 0
        self.ratime_nanosec = 0
        self.atime_nanosec = 0
        self.dff_flag = os.environ.get("DFF_FLAG", "false").lower() in ('true', 't')
        self.dff_dict = {}
        self.dff_skip_ls = []

    def consumeLotStart(self, data):
        logger.info(sys._getframe().f_code.co_name)
        self.mTouchdownCnt = 0
        # Get SmarTest version
        smt_ver_info = data.get_TesterosVersion()
        print("VERSION:" + str(data.get_TesterosVersion()))
        self._smt_os_version = re.search(r"\d+", smt_ver_info).group()
        print("VERSION:" + self._smt_os_version)
        self.lot_id = data.get_LotId()
        # logger.info(f"get_Timezone = {data.get_Timezone()}")
        # logger.info(f"get_SetupTime = {data.get_SetupTime()}")
        logger.debug(f"get_TimeStamp = {data.get_TimeStamp()}")
        # logger.info(f"get_StationNumber = {data.get_StationNumber()}")
        # logger.info(f"get_ModeCode = {data.get_ModeCode()}")
        # logger.info(f"get_RetestCode = {data.get_RetestCode()}")
        # logger.info(f"get_ProtectionCode = {data.get_ProtectionCode()}")
        # logger.info(f"get_BurnTimeMinutes = {data.get_BurnTimeMinutes()}")
        # logger.info(f"get_CommandCode = {data.get_CommandCode()}")
        logger.info(f"get_LotId = {data.get_LotId()}")
        # logger.info(f"get_PartType = {data.get_PartType()}")
        # logger.info(f"get_NodeName = {data.get_NodeName()}")
        # logger.info(f"get_TesterType = {data.get_TesterType()}")
        # logger.info(f"get_JobName = {data.get_JobName()}")
        # logger.info(f"get_JobRevision = {data.get_JobRevision()}")
        # logger.info(f"get_SublotId = {data.get_SublotId()}")
        # logger.info(f"get_OperatorName = {data.get_OperatorName()}")
        # logger.info(f"get_TesterosType = {data.get_TesterosType()}")
        # logger.info(f"get_TesterosVersion = {data.get_TesterosVersion()}")
        # logger.info(f"get_TestType = {data.get_TestType()}")
        # logger.info(f"get_TestStepCode = {data.get_TestStepCode()}")
        # logger.info(f"get_TestTemperature = {data.get_TestTemperature()}")
        # logger.info(f"get_UserText = {data.get_UserText()}")
        # logger.info(f"get_AuxiliaryFile = {data.get_AuxiliaryFile()}")
        # logger.info(f"get_PackageType = {data.get_PackageType()}")
        # logger.info(f"get_FamilyId = {data.get_FamilyId()}")
        # logger.info(f"get_DateCode = {data.get_DateCode()}")
        # logger.info(f"get_FacilityId = {data.get_FacilityId()}")
        # logger.info(f"get_FloorId = {data.get_FloorId()}")
        # logger.info(f"get_ProcessId = {data.get_ProcessId()}")
        # logger.info(f"get_OperationFreq = {data.get_OperationFreq()}")
        # logger.info(f"get_SpecName = {data.get_SpecName()}")
        # logger.info(f"get_SpecVersion = {data.get_SpecVersion()}")
        # logger.info(f"get_FlowId = {data.get_FlowId()}")
        # logger.info(f"get_SetupId = {data.get_SetupId()}")
        # logger.info(f"get_DesignRevision = {data.get_DesignRevision()}")
        # logger.info(f"get_EngineeringLotId = {data.get_EngineeringLotId()}")
        # logger.info(f"get_RomCode = {data.get_RomCode()}")
        # logger.info(f"get_SerialNumber = {data.get_SerialNumber()}")
        # logger.info(f"get_SupervisorName = {data.get_SupervisorName()}")
        # logger.info(f"get_HeadNumber = {data.get_HeadNumber()}")
        # logger.info(f"get_SiteGroupNumber = {data.get_SiteGroupNumber()}")
        # headsiteList = data.get_TotalHeadSiteList()
        # logger.info("get_TotalHeadSiteList:", end="")
        # for headsite in headsiteList:
        #     logger.info(f" {headsite}", end="")
        # logger.info("")
        # logger.info(f"get_ProberHandlerType = {data.get_ProberHandlerType()}")
        # logger.info(f"get_ProberHandlerId = {data.get_ProberHandlerId()}")
        # logger.info(f"get_ProbecardType = {data.get_ProbecardType()}")
        # logger.info(f"get_ProbecardId = {data.get_ProbecardId()}")
        # logger.info(f"get_LoadboardType = {data.get_LoadboardType()}")
        # logger.info(f"get_LoadboardId = {data.get_LoadboardId()}")
        # logger.info(f"get_DibType = {data.get_DibType()}")
        # logger.info(f"get_DibId = {data.get_DibId()}")
        # logger.info(f"get_CableType = {data.get_CableType()}")
        # logger.info(f"get_CableId = {data.get_CableId()}")
        # logger.info(f"get_ContactorType = {data.get_ContactorType()}")
        # logger.info(f"get_ContactorId = {data.get_ContactorId()}")
        # logger.info(f"get_LaserType = {data.get_LaserType()}")
        # logger.info(f"get_LaserId = {data.get_LaserId()}")
        # logger.info(f"get_ExtraEquipType = {data.get_ExtraEquipType()}")
        # logger.info(f"get_ExtraEquipId = {data.get_ExtraEquipId()}")

    def consumeLotEnd(self, data):
        logger.info(sys._getframe().f_code.co_name)
        self.mTouchdownCnt = 0
        logger.debug(f"get_TimeStamp = {data.get_TimeStamp()}")
        # logger.info(f"get_DisPositionCode = {data.get_DisPositionCode()}")
        # logger.info(f"get_UserDescription = {data.get_UserDescription()}")
        # logger.info(f"get_ExecDescription = {data.get_ExecDescription()}")

    def consumeWaferStart(self, data):
        logger.info(sys._getframe().f_code.co_name)
        self.mTouchdownCnt = 0
        self.dff_dict = {}
        self.dff_skip_ls = []
        logger.debug(f"get_TimeStamp = {data.get_TimeStamp()}")
        # logger.info(f"get_WaferSize = {data.get_WaferSize()}")
        # logger.info(f"get_DieHeight = {data.get_DieHeight()}")
        # logger.info(f"get_DieWidth = {data.get_DieWidth()}")
        # logger.info(f"get_WaferUnits = {data.get_WaferUnits()}")
        # logger.info(f"get_WaferFlat = {data.get_WaferFlat()}")
        # logger.info(f"get_CenterX = {data.get_CenterX()}")
        # logger.info(f"get_CenterY = {data.get_CenterY()}")
        # logger.info(f"get_PositiveX = {data.get_PositiveX()}")
        # logger.info(f"get_PositiveY = {data.get_PositiveY()}")
        # logger.info(f"get_HeadNumber = {data.get_HeadNumber()}")
        # logger.info(f"get_SiteGroupNumber = {data.get_SiteGroupNumber()}")
        # logger.info(f"get_WaferId = {data.get_WaferId()}")

    def consumeWaferEnd(self, data):
        logger.info(sys._getframe().f_code.co_name)
        self.mTouchdownCnt = 0
        logger.debug(f"get_TimeStamp = {data.get_TimeStamp()}")
        # logger.info(f"get_HeadNumber = {data.get_HeadNumber()}")
        # logger.info(f"get_SiteGroupNumber = {data.get_SiteGroupNumber()}")
        # logger.info(f"get_TestedCount = {data.get_TestedCount()}")
        # logger.info(f"get_RetestedCount = {data.get_RetestedCount()}")
        # logger.info(f"get_GoodCount = {data.get_GoodCount()}")
        # logger.info(f"get_WaferId = {data.get_WaferId()}")
        # logger.info(f"get_UserDescription = {data.get_UserDescription()}")
        # logger.info(f"get_ExecDescription = {data.get_ExecDescription()}")

    def consumeTestStart(self, data):
        logger.info(sys._getframe().f_code.co_name)
        logger.debug(f"get_TimeStamp = {data.get_TimeStamp()}")
        # headsiteList = data.get_HeadSiteList()
        # logger.info("get_HeadSiteList:", end="")
        # for headsite in headsiteList:
        #     logger.info(f" {liboneAPI.toHead(headsite)}|{liboneAPI.toSite(headsite)}", end="")
        # logger.info("")

    def consumeTestEnd(self, data):
        logger.info(sys._getframe().f_code.co_name)
        self.mTouchdownCnt += 1
        logger.debug(f"get_TimeStamp = {data.get_TimeStamp()}")
        # cnt = data.get_ResultCount()
        # logger.info(f"get_ResultCount = {cnt}")
        # for index in range(0, cnt):
        #     tempU32 = data.query_HeadSite(index)
        #     logger.info(f"Head = {liboneAPI.toHead(tempU32)} Site = {liboneAPI.toSite(tempU32)}")
        #     logger.info(f"query_PartFlag = {data.query_PartFlag(index)}")
        #     logger.info(f"query_NumOfTest = {data.query_NumOfTest(index)}")
        #     logger.info(f"query_SBinResult = {data.query_SBinResult(index)}")
        #     logger.info(f"query_HBinResult = {data.query_HBinResult(index)}")
        #     logger.info(f"query_XCoord = {data.query_XCoord(index)}")
        #     logger.info(f"query_YCoord = {data.query_YCoord(index)}")
        #     logger.info(f"query_TestTime = {data.query_TestTime(index)}")
        #     logger.info(f"query_PartId = {data.query_PartId(index)}")
        #     logger.info(f"query_PartText = {data.query_PartText(index)}")

    def consumeTestFlowStart(self, data):
        logger.info(sys._getframe().f_code.co_name)
        logger.debug(f"get_TimeStamp = {data.get_TimeStamp()}")
        # logger.info(f"get_TestFlowName = {data.get_TestFlowName()}")

    def consumeTestFlowEnd(self, data):
        logger.info(sys._getframe().f_code.co_name)
        logger.debug(f"get_TimeStamp = {data.get_TimeStamp()}")
        # logger.info(f"get_TestFlowName = {data.get_TestFlowName()}")

    def consumeParametricTest(self, data):
        logger.info(sys._getframe().f_code.co_name)
        cnt = data.get_ResultCount()
        # logger.info(f"get_ResultCount = {cnt}")
        for index in range(0, cnt):
            tempU32 = data.query_HeadSite(index)
            # logger.info(f"Head = {toHead(tempU32)} Site = {toSite(tempU32)}")
            # logger.info(f"query_TestNumber = {data.query_TestNumber(index)}")
            # logger.info(f"query_TestText = {data.query_TestText(index)}")
            # logger.info(f"query_LowLimit = {data.query_LowLimit(index)}")
            # logger.info(f"query_HighLimit = {data.query_HighLimit(index)}")
            # logger.info(f"query_Unit = {data.query_Unit(index)}")
            # logger.info(f"query_TestFlag = {data.query_TestFlag(index)}")
            # logger.info(f"query_Result = {data.query_Result(index)}")
            test_num = data.query_TestNumber(index)
            if self._smt_os_version == "8":  # for smarTest8
                test_name = data.query_TestSuite(index)
            else:
                test_name = data.query_TestText(index).split(":")[0]
            result = data.query_Result(index)
            row = [test_num, test_name, result]
            # use only specific test_names and test_numbers define in config file
            # if test_num in set(self.test_suites.testNumber) or test_name in set(self.test_suites.testName):
            self.result_test_suite.append(row)

        if self.result_test_suite:
            # set result test suite as a dataframe
            test_suite_df = pd.DataFrame(self.result_test_suite, columns=["TestId", "testName", "PinValue"])
            # get previous lots data to calculate limit
            if self.dff_flag and test_num not in self.dff_skip_ls:
                DPAT_Samples = 5  # default value
                # get DPAT Sample from base limit if it exists
                try:
                    if test_name in self.callback_args["baseLimits"].keys():
                        DPAT_Samples = int(self.callback_args["baseLimits"][test_name]["DPAT_Samples"])
                except Exception as e:
                    logger.error(f"Cannot retrieve the DPAT_Samples of {test_name}, {e}")
                # get previous data using DFF if n_sample is less than DPAT Sample
                if (test_suite_df["TestId"] == test_num).sum() < DPAT_Samples:
                    # retrieve previous data if it hasn't been fetched before
                    if test_num not in self.dff_dict.keys():
                        dff_data_ls: list = self.consumeDFFData(test_num)
                        self.dff_dict[test_num] = dff_data_ls
                    dff_data: list = self.dff_dict[test_num]
                    # concatenate previous data to test_suite_df if data is not empty
                    if dff_data:
                        # skip the test number if dff data has already been retrieved
                        self.dff_skip_ls.append(test_num)
                        # append the previous data to result test suite
                        for result in dff_data:
                            self.result_test_suite.append([test_num, test_name, result])
                        # concatenate previous data
                        df = pd.DataFrame({
                            "TestId": [test_num] * len(dff_data),
                            "testName": [test_name] * len(dff_data),
                            "PinValue": dff_data
                        })
                        test_suite_df = pd.concat([test_suite_df, df], axis=0)
            # execute DPAT using test_suite_df
            self.callback_thread = self.threaded_callback(
                self.callback_fn, test_suite_df, self.callback_args
            )

    def consumeFunctionalTest(self, data):
        logger.info(sys._getframe().f_code.co_name)
        # cnt = data.get_ResultCount()
        # logger.info(f"get_ResultCount = {cnt}")
        # for index in range(0, cnt):
        #     tempU32 = data.query_HeadSite(index)
        #     logger.info(f"Head = {liboneAPI.toHead(tempU32)} Site = {liboneAPI.toSite(tempU32)}")
        #     logger.info(f"query_TestNumber = {data.query_TestNumber(index)}")
        #     logger.info(f"query_TestText = {data.query_TestText(index)}")
        #     logger.info(f"query_TestFlag = {data.query_TestFlag(index)}")
        #     logger.info(f"query_CycleCount = {data.query_CycleCount(index)}")
        #     logger.info(f"query_NumberFail = {data.query_NumberFail(index)}")
        #     failpins = data.query_FailPins(index)
        #     logger.info("query_FailPins:", end="")
        #     for p in failpins:
        #         logger.info(f" {p}", end="")
        #     logger.info("")
        #     logger.info(f"query_VectNam = {data.query_VectNam(index)}")

    def consumeMultiParametric(self, data):
        logger.info(sys._getframe().f_code.co_name)
        # cnt = data.get_ResultCount()
        # logger.info(f"get_ResultCount = {cnt}")
        # for index in range(0, cnt):
        #     tempU32 = data.query_HeadSite(index)
        #     logger.info(f"Head = {liboneAPI.toHead(tempU32)} Site = {liboneAPI.toSite(tempU32)}")
        #     logger.info(f"query_TestNumber = {data.query_TestNumber(index)}")
        #     logger.info(f"query_TestText = {data.query_TestText(index)}")
        #     logger.info(f"query_LowLimit = {data.query_LowLimit(index)}")
        #     logger.info(f"query_HighLimit = {data.query_HighLimit(index)}")
        #     logger.info(f"query_Unit = {data.query_Unit(index)}")
        #     logger.info(f"query_TestFlag = {data.query_TestFlag(index)}")
        #     results = data.query_Results(index)
        #     logger.info(f"query_Results: Cnt({len(results)})", end="")
        #     for r in results:
        #         logger.info(f" {r}", end="")
        #     logger.info("")

    def consumeDeviceData(self, data):
        logger.info(sys._getframe().f_code.co_name)
        # logger.info(f"get_TestProgramDir = {data.get_TestProgramDir()}")
        # logger.info(f"get_TestProgramName = {data.get_TestProgramName()}")
        # logger.info(f"get_TestProgramPath = {data.get_TestProgramPath()}")
        # logger.info(f"get_PinConfig = {data.get_PinConfig()}")
        # logger.info(f"get_ChannelAttribute = {data.get_ChannelAttribute()}")
        # cnt = data.get_BinInfoCount()
        # logger.info(f"get_BinInfoCount = {cnt}")
        # for index in range(0, cnt):
        #     logger.info(f"SBin({data.query_SBinNumber(index)})", end="")
        #     logger.info(f" Name({data.query_SBinName(index)})", end="")
        #     logger.info(f" Type({data.query_SBinType(index)})", end="")
        #     logger.info(f" HBin({data.query_HBinNumber(index)})", end="")
        #     logger.info(f" Name({data.query_HBinName(index)})", end="")
        #     logger.info(f" Type({data.query_HBinType(index)})", end="")
        #     logger.info("")

    def consumeUserDefinedData(self, data):
        logger.info(sys._getframe().f_code.co_name)
        # userDefined = data.get_UserDefined()
        # for key,value in userDefined.items():
        #     logger.info(f"[{key}] = {value}")

    def consumeFile(self, data):
        logger.info(sys._getframe().f_code.co_name)
        # logger.info(f"get_FileName = {data.get_FileName()}")

    def consumeDatalogText(self, data):
        logger.info(sys._getframe().f_code.co_name)
        logger.debug(f"get_TimeStamp = {data.get_TimeStamp()}")
        # logger.info(f"get_DataLogText = {data.get_DataLogText()}")

    def getCustomerDFFData(self, test_number: str) -> list:
        result: list = []
        sql_query: str = f"""
        SELECT data
        FROM dff_debug_tpi_zqr2
        ORDER BY timestamp::timestamp DESC
        LIMIT 1;
        """
        dff_data: dict = dff_query(sql_query)
        # get previous result data if it's not empty
        if dff_data:
            parsed_data: dict = json.loads(dff_data)
            logger.info(f"getCustomerDFFData => parsed_data {parsed_data}")
            result = parsed_data[0]["data"]
            logger.info(len(parsed_data))
            logger.info(f"Retrieve previous data from DFF, data = {result}")
        else:
            logger.info("There is no previous data available from DFF")
        logger.debug(f"The query is '{sql_query}'")
        return result

    def consumeDFFData(self, test_number: str) -> list:
        result: list = []
        sql_query: str = f"""
        SELECT
            (SELECT json_agg(row_to_json(p))
            FROM (
                SELECT lot_id, x_coord, y_coord, result, low_limit, high_limit
                FROM ptr
                WHERE test_number = {test_number} AND lot_id = '{self.lot_id}'
            ) p
        ) AS ptr
        """
        dff_data: dict = dff_query(sql_query)
        # get previous result data if it's not empty
        if dff_data:
            parsed_data: dict = json.loads(dff_data)
            result = [test["result"] for test in parsed_data[0]["ptr"]]
            logger.info(len(parsed_data))
            # result:list = pd.DataFrame(parsed_data["ptr"])["result"].tolist()
            logger.info(f"Retrieve previous data from DFF, data = {result}")
        else:
            logger.info("There is no previous data available from DFF")
        logger.debug(f"The query is '{sql_query}'")
        return result

    def consumeData(self, tc, data):
        try:
            datatype = data.getType()
            if datatype == DataType.DATA_TYP_PRODUCTION_LOTSTART:
                self.consumeLotStart(data)
            elif datatype == DataType.DATA_TYP_PRODUCTION_LOTEND:
                if self.callback_thread:
                    self.callback_thread.join()  # Wait for the callback thread to finish
                self.consumeLotEnd(data)
            elif datatype == DataType.DATA_TYP_PRODUCTION_WAFERSTART:
                self.consumeWaferStart(data)
            elif datatype == DataType.DATA_TYP_PRODUCTION_WAFEREND:
                self.consumeWaferEnd(data)
            elif datatype == DataType.DATA_TYP_PRODUCTION_TESTSTART:
                self.consumeTestStart(data)
            elif datatype == DataType.DATA_TYP_PRODUCTION_TESTEND:
                self.consumeTestEnd(data)
            elif datatype == DataType.DATA_TYP_PRODUCTION_TESTFLOWSTART:
                self.consumeTestFlowStart(data)
            elif datatype == DataType.DATA_TYP_PRODUCTION_TESTFLOWEND:
                self.consumeTestFlowEnd(data)
            elif datatype == DataType.DATA_TYP_MEASURED_PARAMETRIC:
                self.consumeParametricTest(data)
            elif datatype == DataType.DATA_TYP_MEASURED_FUNCTIONAL:
                self.consumeFunctionalTest(data)
            elif datatype == DataType.DATA_TYP_MEASURED_MULTI_PARAM:
                self.consumeMultiParametric(data)
            elif datatype == DataType.DATA_TYP_DEVICE:
                self.consumeDeviceData(data)
            elif datatype == DataType.DATA_TYP_USERDEFINED:
                self.consumeUserDefinedData(data)
            elif datatype == DataType.DATA_TYP_FILE:
                self.consumeFile(data)
            elif datatype == DataType.DATA_TYP_DATALOGTEXT:
                self.consumeDatalogText(data)
            else:
                logger.error(f"Unknown data type: {datatype.name}, data number: {datatype.value}")
        except Exception as e:
            logger.error(f"Something went wrong while executing the data handler, dataType = {datatype.name}", exc_info=True)
            logger.error(f"Error message: {e}")

    def consumeTPSend(self, tc, data):
        self.time_nanosec = time.time_ns()
        logger.info(f"Received data from {tc.testerId}, length is {len(data)} ")
        logger.info(f"Received data from {tc.testerId}, string is {data} ")
        lastdata = data
        datastr = str(data)
        if "SKU_NAME" in data:
            jsonObj = json.loads(datastr)
            logger.info(datastr)
            for key in jsonObj:
                logger.debug(f"{key} : {jsonObj[key]}")
                logger.debug(f"TPRequest: dataval= {jsonObj[key]}")
                dataval = jsonObj[key]
                for subkey in dataval:
                    logger.debug(f"subkey= {subkey}")
                    logger.debug(f"subval= {jsonObj[key][subkey]}")
            self.ecid = jsonObj["SKU_NAME"]["MSFT_ECID"]
            logger.info(f"TPSend: {self.ecid=}")
            self.testname = jsonObj["SKU_NAME"]["testname"]
            logger.info(f"TPSend: {self.testname=}")
            # print("TPSend: hosttime= ",hosttime)
            packetstr = jsonObj["SKU_NAME"]["packetString"]
            logger.info(f"TPSend: {packetstr=}")
            rawvalue = jsonObj["SKU_NAME"]["RawValue"]
            logger.info(f"TPSend: {rawvalue=}")
            lolim = jsonObj["SKU_NAME"]["LowLim"]
            logger.info(f"TPSend: {lolim=}")
            hilim = jsonObj["SKU_NAME"]["HighLim"]
            logger.info(f"TPSend: {hilim=}")
        self.stime_nanosec = time.time_ns()
        return

    def consumeTPRequest(self, tc, request):
        logger.info(f"consumeTPRequest Start")
        logger.info(f"Received request from {tc.testerId}, command is {request}")
        # Parse the JSON request
        jsonObj = json.loads(request)
        action = jsonObj.get("request")
        # Initialize variables with example values (replace with actual values as needed)
        adjHBin = -1
        self.ratime_nanosec = time.time_ns()
        if action == "health":
            print("TPRqst: processing health")
            response = "success"
        elif action == "CalcNewLimits" and self.ecid and "PassRangeLsl" in self.results_df:
            # Construct the dictionary with all the fields
            edge_min_value = self.results_df["PassRangeLsl"].iloc[-1]
            edge_max_value = self.results_df["PassRangeUsl"].iloc[-1]
            self.atime_nanosec = time.time_ns()
            response_dict = {
                "MSFT_ECID": self.ecid,
                "testname": self.testname,
                "AdjLoLim": str(edge_min_value),
                "AdjHiLim": str(edge_max_value),
                "AdjHBin": str(adjHBin),
                "SendAppTime": str(self.time_nanosec),
                "SendAppRetTime": str(self.stime_nanosec),
                "RqstAppTime": str(self.ratime_nanosec),
                "RqstAppRetTime": str(self.atime_nanosec),
            }
            # TODO: Clarify what each of these time requests mean.
            # Convert the dictionary to a JSON string using json.dumps
            response = json.dumps(response_dict)
        elif action == "SMT7_CalcNewLimits" and "PassRangeLsl" in self.results_df:
            # Copy and sort the results dataframe
            new_limits_df = self.results_df.sort_values(by="TestId").reset_index(drop=True)
            # Change the dataframe to JSON format
            result = [{str(row["TestId"]): [str(row["PassRangeLsl"]), str(row["PassRangeUsl"])]} for _, row in new_limits_df.iterrows()]
            response = json.dumps({"CalcResult": result})
        elif action == "Get_DFF_Data":
            data_result = self.getCustomerDFFData(1)
            logger.info(f"Get_DFF_Data data_result is {data_result}")
            response = data_result
        else:
            response = json.dumps({"response": "empty or unsupported action"})
        logger.info(f"Response: {response}")
        return response

    def threaded_callback(self, callback_fn, dataframe, callback_args):
        thread = threading.Thread(
            target=callback_fn, args=(dataframe, callback_args, self.save_result)
        )
        thread.start()
        return thread

    def save_result(self, df):
        self.results_df = df
```
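The DFF read in sample.py follows a create, poll, fetch pattern: submit the query, poll its status while it reports RUNNING, and fetch the result once it reports COMPLETED. The sketch below isolates that pattern with a bounded wait. `FakeDFFClient` and its methods are hypothetical stand-ins so the example runs without liboneAPI; they do not reflect the real `DFFData` signatures, and the interval and timeout values are arbitrary.

```python
import time


class FakeDFFClient:
    """Hypothetical stand-in for the DFF query API: RUNNING twice, then COMPLETED."""

    def __init__(self):
        self.polls = 0

    def create_query(self, sql):
        return "job-1"

    def status(self, job_id):
        self.polls += 1
        return "RUNNING" if self.polls < 3 else "COMPLETED"

    def result(self, job_id):
        return '[{"data": [1.0, 2.0]}]'


def poll_query(client, sql, interval_s=0.01, timeout_s=1.0):
    """Submit a query and poll until it completes, fails, or times out."""
    job_id = client.create_query(sql)
    deadline = time.monotonic() + timeout_s
    while time.monotonic() < deadline:
        state = client.status(job_id)
        if state == "COMPLETED":
            return client.result(job_id)
        if state != "RUNNING":
            return None  # unexpected state: give up instead of looping forever
        time.sleep(interval_s)  # back off between polls
    return None  # timed out


client = FakeDFFClient()
payload = poll_query(client, "SELECT 1")
print(payload, client.polls)
```

Sleeping between polls and enforcing a deadline keeps the handler from spinning on a long-running query, which matters because the test program is waiting for the reply.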

Set DFF_FLAG="True" in the Dockerfile to enable the DFF feature:

sed -i 's/DFF_FLAG=\"False\"/DFF_FLAG=\"True\"/g' ~/apps/application-dpat-v3.1.0/dff-data-read-v1/Dockerfile

Build image

cd ~/apps/application-dpat-v3.1.0/dff-data-read-v1
sudo docker build ./ --tag=registry.advantest.com/acsqa-dpat-gemini/dff-data-read:ExampleTag

Push image

sudo docker push registry.advantest.com/acsqa-dpat-gemini/dff-data-read:ExampleTag

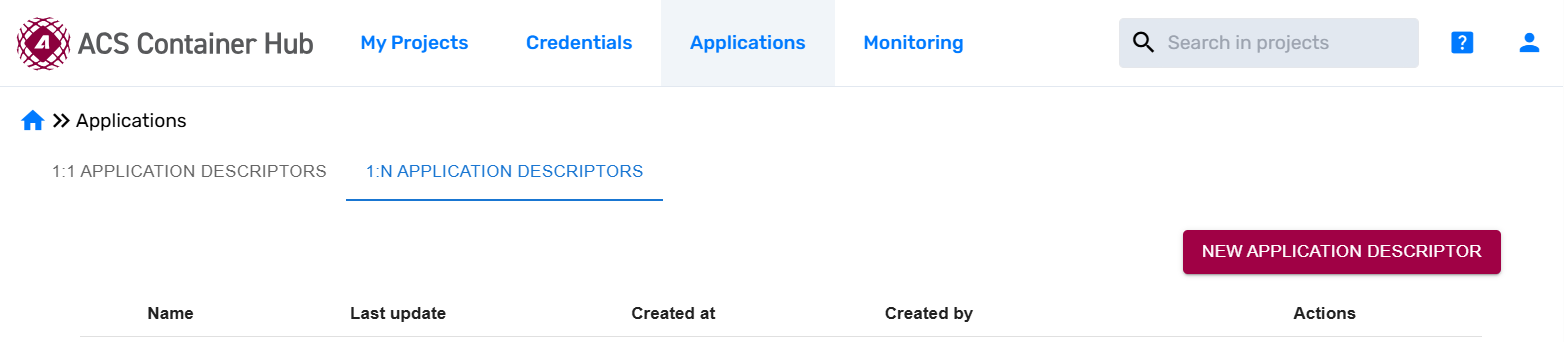

Create App descriptor project on the Container Hub#

Login to the ACS Container Hub using the org admin account.

Open the “Applications” -> “1:N Application Descriptors” page and click the “New Application Descriptor” button.

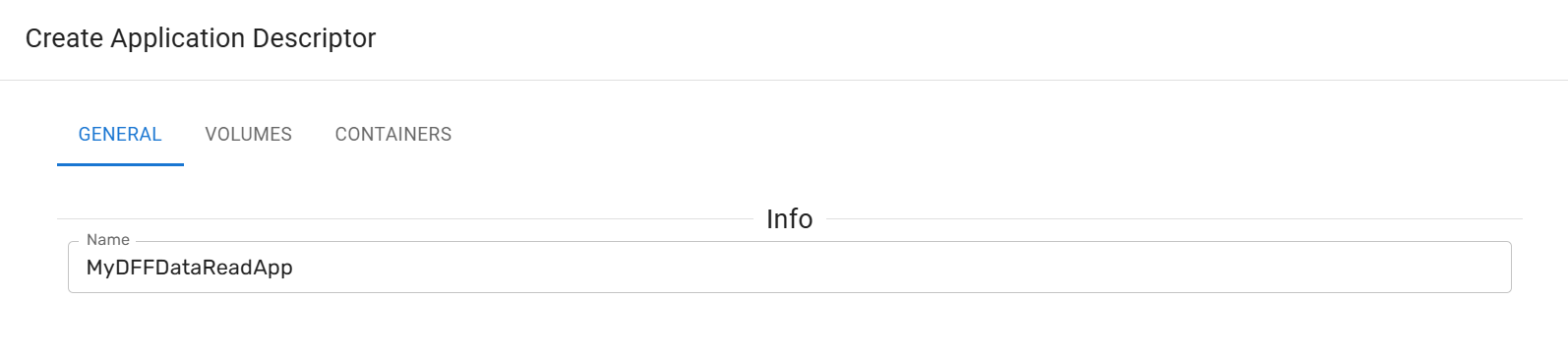

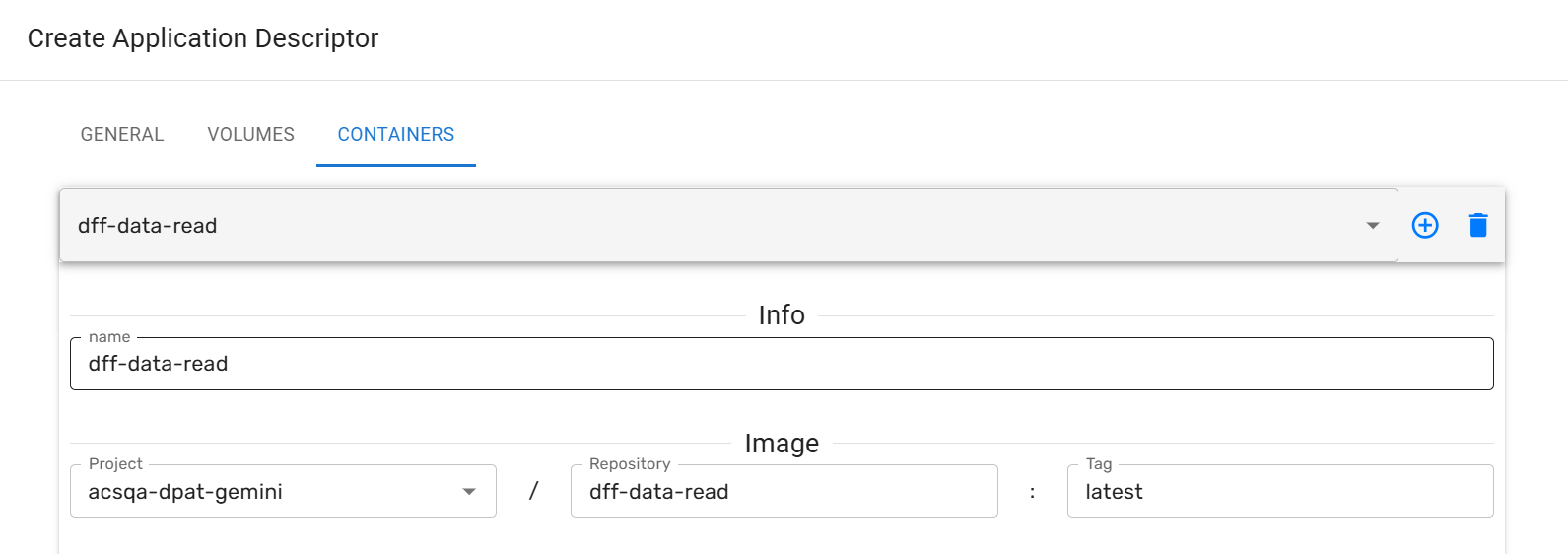

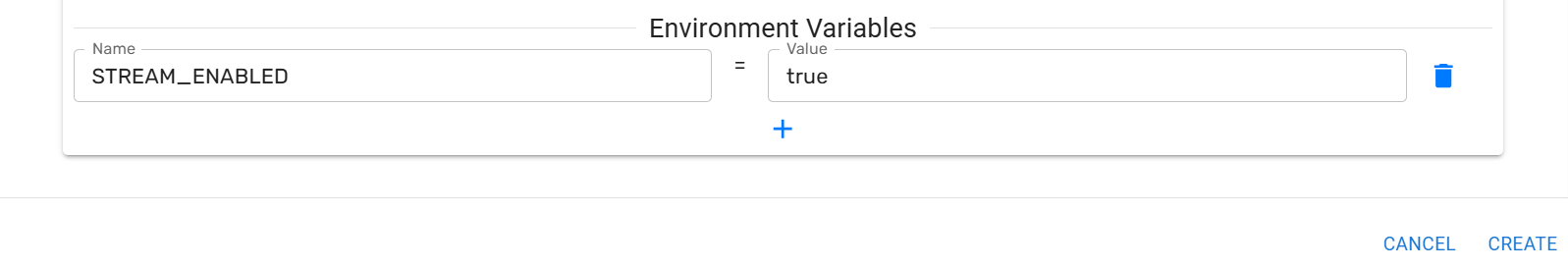

In the “Create Application Descriptor” dialog that appears, enter or select the following fields, then click the “CREATE” button:

GENERAL -> Name: MyDFFDataReadApp

CONTAINERS -> Info -> name: dff-data-read

CONTAINERS -> Image: acsqa-dpat-gemini/dff-data-read:ExampleTag

CONTAINERS -> Environment Variables:

STREAM_ENABLED=true
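Note that the Image value above omits the registry host that appears in the docker build and docker push commands. The sketch below shows how the two strings relate. The helper name descriptor_image is hypothetical, and the idea that the Container Hub resolves the short form against registry.advantest.com is an assumption drawn only from the values used in this tutorial.

```python
REGISTRY_HOST = "registry.advantest.com"


def descriptor_image(pushed_ref, registry_host=REGISTRY_HOST):
    """Strip the registry host from a pushed image reference.

    Returns the "project/name:tag" form used in the Image field above.
    """
    prefix = registry_host + "/"
    if not pushed_ref.startswith(prefix):
        raise ValueError(f"{pushed_ref!r} was not pushed to {registry_host}")
    return pushed_ref[len(prefix):]


pushed = "registry.advantest.com/acsqa-dpat-gemini/dff-data-read:ExampleTag"
image_field = descriptor_image(pushed)
print(image_field)
```

Remember to replace the acsqa-dpat-gemini project and the ExampleTag tag with your own values in both places.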

Replicate the newly created App Descriptor to the 2nd Unified Server on Harbor#

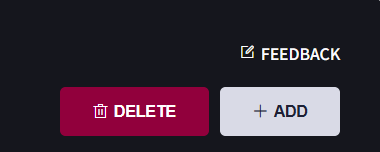

Submit a feedback request for DFF from AUS1 to AUS2#

Click “FEEDBACK” at the top right of the “Virtual Machine” page.

In the “Feedback” dialog that appears:

Add a Summary:

DFF from AUS1 to AUS2 request

Add a Description:

Host Controller 1 name: XXXX private IP: XXX.XXX.XXX.XXX
Unified Server 1 name: XXXX private IP: XXX.XXX.XXX.XXX
Host Controller 2 name: XXXX private IP: XXX.XXX.XXX.XXX
Unified Server 2 name: XXXX private IP: XXX.XXX.XXX.XXX
Container hub org: XXXX
App descriptor name: XXXX
Contact me by: <email address>

Click “Submit”

Once the request is completed, the dff-data-read application runs on each Unified Server, and you can obtain the names of the two AUS instances, in the form “gemini-lab-advantest-cluster-xxxxxxxx”. These names are used later on the DFF UI website.

Configure acs_nexus service in each Host Controller#

Edit /opt/acs/nexus/conf/acs_nexus.ini file.

Make sure the Auto_Deploy option is false

[Auto_Deploy]
Enabled=false

Make sure the Enabled option is true in the TestFloor_Server section. This enables “DFF write” to the Unified Server.

[TestFloor_Server]
Enabled=true
Control_Port=5001
Data_Port=5002
Heartbeat_Cycle=10 ;; unit s

Restart acs_nexus service

sudo systemctl restart acs_nexus
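Before restarting the service, it can help to confirm that both settings have the expected values. The following is a minimal sketch using Python's standard configparser module. The function name check_nexus_config and the sample text are hypothetical. It also assumes that the real acs_nexus.ini contains nothing else that configparser rejects, which has not been verified here.

```python
import configparser

# Sample content matching the two sections shown above.
SAMPLE_INI = """\
[Auto_Deploy]
Enabled=false

[TestFloor_Server]
Enabled=true
Control_Port=5001
Data_Port=5002
Heartbeat_Cycle=10 ;; unit s
"""


def check_nexus_config(text):
    """Return a list of problems found in acs_nexus.ini content."""
    # Treat " ;" as the start of an inline comment so "10 ;; unit s" parses as "10".
    parser = configparser.ConfigParser(inline_comment_prefixes=(";",))
    parser.read_string(text)
    problems = []
    if parser.getboolean("Auto_Deploy", "Enabled", fallback=True):
        problems.append("Auto_Deploy must be disabled")
    if not parser.getboolean("TestFloor_Server", "Enabled", fallback=False):
        problems.append("TestFloor_Server must be enabled")
    return problems


problems = check_nexus_config(SAMPLE_INI)
print(problems or "configuration looks correct")
```

To check the real file, pass the text of /opt/acs/nexus/conf/acs_nexus.ini to check_nexus_config instead of the sample string.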

Running the SmarTest Test Program on the 1st Host Controller to generate test data and trigger the DFF data transfer#

Start SmarTest8

Run the script that starts SmarTest8:

cd ~/apps/application-dpat-v3.1.0
sh start_smt8.sh

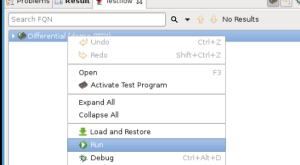

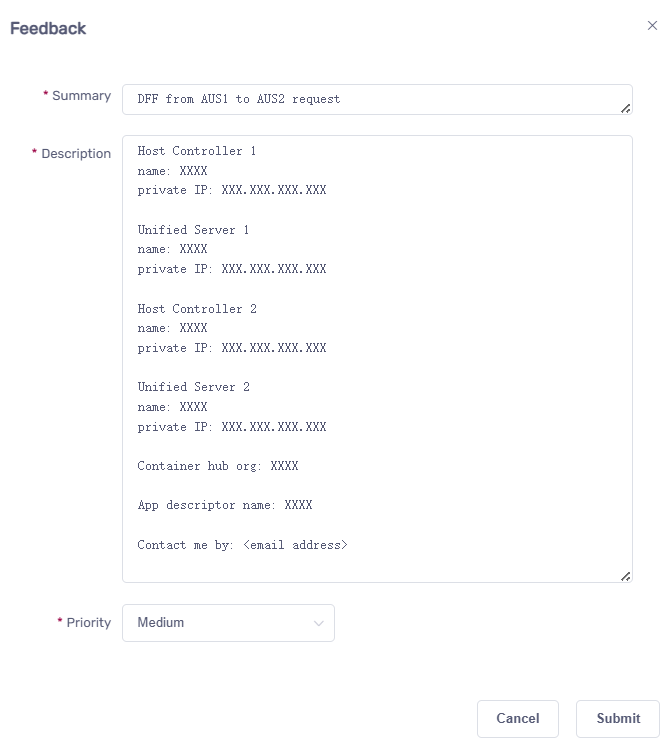

Activate Testflow:

To activate the Testflow, you’ll need to right-click on the Testflow entry at the bottom and then select “Activate…”:

Modify code to upload DFF data to 1st Unified Server.

Replace the file ./apps/application-dpat-v3.1.0/client/SMT8/testprogram/demo-RTDI/tml/misc/measuredValueFileReader.java and download the DFF schema file schema_good.json with the following commands:

cd ~
mv ./apps/application-dpat-v3.1.0/client/SMT8/testprogram/demo-RTDI/tml/misc/measuredValueFileReader.java ./apps/application-dpat-v3.1.0/client/SMT8/testprogram/demo-RTDI/tml/misc/measuredValueFileReader.java.old
wget -N http://10.44.5.139/app/measuredValueFileReader_dffWrite-3.1.0.tar.gz
tar zvxf measuredValueFileReader_dffWrite-3.1.0.tar.gz

In the new measuredValueFileReader.java file, you can find the code that imports the schema file and uploads the DFF data via TPI:

int result = NexusTPI.DFF.importSchema("./demo-RTDI/RTDI/schema_good.json");
System.out.println("NexusTPI importSchema result=" + result);
if (NexusTPI.SUCCEED == result) {
    // Set default value
    NexusTPI.DFF.set("ecid", "DATA_IS_ECID_1_x1_y1")
        .set("filename","abcde.dff.json")
        .set("data_type","DFF")
        .set("timestamp", getTimeStamp())
        .set("lot_id", "ABCDE.23")
        .set("program_name", "A5.A0.CP1.E.S.25C.1A.02")
        .set("site_num", 1)
        .set("test_stage", "FT")
        .set("schema_version", "1.0.0");
    // Construct DFF data
    JSONObject json = new JSONObject();
    json.put("lot_id", "");
    json.put("wafer_id", "None");
    json.put("part_id", "2");
    json.put("x_coord", "-32768");
    json.put("y_coord", "-32768");
    json.put("part_text", "");
    json.put("test_number", "50105902");
    json.put("site_number", "2");
    json.put("head_number", "1");
    json.put("test_flag", "00");
    json.put("result", "55.9567985535");
    json.put("test_text", "Parametric");
    json.put("low_limit", "40.0");
    json.put("high_limit", "70.0");
    json.put("units", "V");
    json.put("param_flag", "00");
    json.put("alarm_id", "");
    json.put("optional_flag", "");
    json.put("result_scaling", "0");
    json.put("lowlimit_scaling", "0");
    json.put("highlimit_scaling", "0");
    json.put("result_format", "");
    json.put("low_limit_format", "");
    json.put("high_limit_format", "");
    json.put("low_spec", "0.0");
    json.put("high_spec", "0.0");
    json.put("test_suite", "Main.RXGainTestSuite");
    json.put("measurement_name", "");
    json.put("save_at", "1758001024067");
    result = NexusTPI.DFF.upload(json.toString());
    switch(result) {
    case NexusTPI.DFF_CONNECTED:
        System.out.println("NexusTPI Upload DFF: Start to upload DFF data.");
        NexusTPI.DFF.regUploadCallback(measuredValueFileReader::onUploadComplete);
        break;
    case NexusTPI.DFF_CONNECT_FAIL:
        System.out.println("NexusTPI Upload DFF: Failed to establish data-upload connection.");
        break;
    case NexusTPI.DFF_DATA_EMPTY:
        System.out.println("NexusTPI Upload DFF: Data is empty.");
        break;
    case NexusTPI.DFF_PROPERTY_INVALID:
        System.out.println("NexusTPI Upload DFF: Properties invalid to schema.");
        break;
    default:
        break;
    }
}
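The snippet above sends two kinds of information: properties set with set(...), and a data record uploaded as a JSON string. To inspect that split outside SmarTest, the following Python sketch rebuilds both parts with the same demo values. It does not call NexusTPI. The function names and the reduced set of record fields are assumptions for illustration only.

```python
import json
from datetime import datetime


def get_time_stamp(now=None):
    """Format a timestamp like the Java pattern "yyyy-MM-dd HH:mm:ss"."""
    return (now or datetime.now()).strftime("%Y-%m-%d %H:%M:%S")


def build_dff_properties(now=None):
    """Properties that the Java code sets through NexusTPI.DFF.set(...)."""
    return {
        "ecid": "DATA_IS_ECID_1_x1_y1",
        "filename": "abcde.dff.json",
        "data_type": "DFF",
        "timestamp": get_time_stamp(now),
        "lot_id": "ABCDE.23",
        "program_name": "A5.A0.CP1.E.S.25C.1A.02",
        "site_num": 1,
        "test_stage": "FT",
        "schema_version": "1.0.0",
    }


def build_dff_record():
    """A subset of the uploaded record; all values are strings, as in the Java code."""
    return {
        "part_id": "2",
        "test_number": "50105902",
        "result": "55.9567985535",
        "low_limit": "40.0",
        "high_limit": "70.0",
        "units": "V",
        "test_suite": "Main.RXGainTestSuite",
    }


properties = build_dff_properties(datetime(2025, 1, 2, 3, 4, 5))
record_json = json.dumps(build_dff_record())
print(properties["timestamp"])
print(record_json)
```

In the Java code, site_num is the only property set as an integer, while every value in the data record is set as a string.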

The complete code:


/**

*

*/

package misc;

import java.time.LocalDateTime;

import java.time.format.DateTimeFormatter;

import java.util.List;

import java.util.Random;

import org.json.JSONObject;

import base.SOCTestBase;

import nexus.tpi.NexusTPI;

import shifter.GlobVars;

import xoc.dta.annotations.In;

import xoc.dta.datalog.IDatalog;

import xoc.dta.datatypes.MultiSiteDouble;

import xoc.dta.measurement.IMeasurement;

import xoc.dta.resultaccess.IPassFail;

import xoc.dta.testdescriptor.IFunctionalTestDescriptor;

import xoc.dta.testdescriptor.IParametricTestDescriptor;

/**

* ACS Variable control and JSON write demo using SMT8 for Washington

*/

@SuppressWarnings("unused")

public class measuredValueFileReader extends SOCTestBase {

public IMeasurement measurement;

public IParametricTestDescriptor pTD;

public IFunctionalTestDescriptor fTD;

public IFunctionalTestDescriptor testDescriptor;

class params {

private String _upper;

private String _lower;

private String _unit;

public params(String _upper, String lower, String _unit) {

super();

this._upper = _upper;

this._lower = lower;

this._unit = _unit;

}

}

@In

public String testName;

String testtext = "";

String powerResult = "";

int hb_value;

double edge_min_value = 0.0;

double edge_max_value = 0.0;

double prev_edge_min_value = 0.0;

double prev_edge_max_value = 0.0;

boolean c0Flag = false;

boolean c1Flag = false;

boolean dffFlag = true;

String[] ecid_strs = {"U6A629_03_x1_y2", "U6A629_03_x1_y3", "U6A629_03_x1_y4", "U6A629_03_x2_y1", "U6A629_03_x2_y2", "U6A629_03_x2_y6", "U6A629_03_x2_y7", "U6A629_03_x3_y10", "U6A629_03_x3_y2", "U6A629_03_x3_y3", "U6A629_03_x3_y7", "U6A629_03_x3_y8", "U6A629_03_x3_y9", "U6A629_03_x4_y11", "U6A629_03_x4_y1", "U6A629_03_x4_y2", "U6A629_03_x4_y4", "U6A629_03_x5_y0", "U6A629_03_x5_y10", "U6A629_03_x5_y11", "U6A629_03_x5_y3", "U6A629_03_x5_y7", "U6A629_03_x5_y8", "U6A629_03_x6_y3", "U6A629_03_x6_y5", "U6A629_03_x6_y6", "U6A629_03_x6_y7", "U6A629_03_x7_y4", "U6A629_03_x7_y7", "U6A629_03_x8_y2", "U6A629_03_x8_y3", "U6A629_03_x8_y5", "U6A629_03_x8_y7", "U6A629_04_x1_y2", "U6A629_04_x2_y2", "U6A629_04_x2_y3", "U6A629_04_x2_y7", "U6A629_04_x2_y8", "U6A629_04_x2_y9", "U6A629_04_x3_y10", "U6A629_04_x3_y2", "U6A629_04_x3_y3", "U6A629_04_x3_y4", "U6A629_04_x3_y5", "U6A629_04_x3_y7", "U6A629_04_x3_y9", "U6A629_04_x4_y11", "U6A629_04_x4_y2", "U6A629_04_x4_y7", "U6A629_04_x4_y8", "U6A629_04_x4_y9", "U6A629_04_x5_y4", "U6A629_04_x5_y7", "U6A629_04_x6_y5", "U6A629_04_x6_y6", "U6A629_04_x6_y8", "U6A629_04_x7_y10", "U6A629_05_x2_y2", "U6A629_05_x3_y0", "U6A629_05_x4_y1", "U6A629_05_x4_y2", "U6A629_05_x5_y1", "U6A629_05_x5_y2", "U6A629_05_x5_y3", "U6A629_05_x6_y1", "U6A629_05_x6_y3", "U6A629_05_x7_y2", "U6A629_05_x7_y3", "U6A629_05_x8_y3", "U6A633_10_x4_y9", "U6A633_10_x8_y9", "U6A633_11_x2_y2", "U6A633_11_x3_y9", "U6A633_11_x5_y2", "U6A633_11_x6_y10", "U6A633_11_x6_y5", "U6A633_11_x7_y9", "U6A633_11_x8_y8", "U6A633_11_x8_y9", "U6A633_12_x2_y3", "U6A633_12_x2_y5", "U6A633_12_x2_y7", "U6A633_12_x2_y9", "U6A633_12_x3_y1", "U6A633_12_x3_y9", "U6A633_12_x4_y0", "U6A633_12_x5_y1", "U6A633_12_x7_y4"};

int ecid_ptr = 0;

long hnanosec = 0;

List<Double> current_values;

protected IPassFail digResult;

public String pinList;

@Override

public void update() {

/* Overwrite limits provided by the test table */

// pTD.setLowLimit(0.9).setHighLimit(1.1).setTestText("ParametricRXGain").setTestNumber(401);

}

private static String getTimeStamp() {

LocalDateTime now = LocalDateTime.now();

DateTimeFormatter formatter = DateTimeFormatter.ofPattern("yyyy-MM-dd HH:mm:ss");

return now.format(formatter);

}

private static String getLargeString(int size) {

StringBuilder sb = new StringBuilder(size);

for (int i = 0; i < size; i++) {

sb.append('x');

}

return sb.toString();

}

public static void onUploadComplete(int result, String properties, String data) {

System.out.println("onUploadComplete: result: " + result);

System.out.println("onUploadComplete: properties: " + properties);

if(data.length() < 100) {

System.out.println("onUploadComplete: data: " + data);

} else {

System.out.println("onUploadComplete: data length: " + data.length());

}

}

@Override

public void setup() {

int initRes = NexusTPI.init();

System.out.println("NexusTPI init res:"+initRes);

}

@SuppressWarnings("static-access")

@Override

public void execute() {

int resh;

int resn;

int resx;

IDatalog datalog = context.datalog(); // use in case logDTR does not work

int index = 0;

Double raw_value = 0.0;

String rvalue = "";

String low_limitStr = "";

String high_limitStr = "";

Double low_limit = 0.0;

Double high_limit = 0.0;

String unit = "";

String packetValStr = "";

String sku_name = "";

String command = "";

boolean validResponse = false;

/**

* This portion of the code will read an external datalog file in XML and compare data in

* index 0 to the limit variables received from Nexus

*/

if (testName.contains("RX_gain_2412_C0_I[1]")) {

try {

int result = NexusTPI.DFF.importSchema("./demo-RTDI/RTDI/schema_good.json");

System.out.println("NexusTPI importSchema result=" + result);

if (NexusTPI.SUCCEED == result) {

// Set default value

NexusTPI.DFF.set("ecid", "DATA_IS_ECID_1_x1_y1")

.set("filename","abcde.dff.json")

.set("data_type","DFF")

.set("timestamp", getTimeStamp())

.set("lot_id", "ABCDE.23")

.set("program_name", "A5.A0.CP1.E.S.25C.1A.02")

.set("site_num", 1)

.set("test_stage", "FT")

.set("schema_version", "1.0.0");

// Construct DFF data

JSONObject json = new JSONObject();

json.put("lot_id", "");

json.put("wafer_id", "None");

json.put("part_id", "2");

json.put("x_coord", "-32768");

json.put("y_coord", "-32768");

json.put("part_text", "");

json.put("test_number", "50105902");

json.put("site_number", "2");

json.put("head_number", "1");

json.put("test_flag", "00");

json.put("result", "55.9567985535");

json.put("test_text", "Parametric");

json.put("low_limit", "40.0");

json.put("high_limit", "70.0");

json.put("units", "V");

json.put("param_flag", "00");

json.put("alarm_id", "");

json.put("optional_flag", "");

json.put("result_scaling", "0");

json.put("lowlimit_scaling", "0");

json.put("highlimit_scaling", "0");

json.put("result_format", "");

json.put("low_limit_format", "");

json.put("high_limit_format", "");

json.put("low_spec", "0.0");

json.put("high_spec", "0.0");

json.put("test_suite", "Main.RXGainTestSuite");

json.put("measurement_name", "");

json.put("save_at", "1758001024067");

result = NexusTPI.DFF.upload(json.toString());

switch(result) {

case NexusTPI.DFF_CONNECTED:

System.out.println("NexusTPI Upload DFF: Start to upload DFF data.");

NexusTPI.DFF.regUploadCallback(measuredValueFileReader::onUploadComplete);

break;

case NexusTPI.DFF_CONNECT_FAIL:

System.out.println("NexusTPI Upload DFF: Failed to establish data-upload connection.");

break;

case NexusTPI.DFF_DATA_EMPTY:

System.out.println("NexusTPI Upload DFF: Data is empty.");

break;

case NexusTPI.DFF_PROPERTY_INVALID:

System.out.println("NexusTPI Upload DFF: Properties invalid to schema.");

break;

default:

break;

}

}

} catch (Exception e) {

e.printStackTrace();

throw e;

}

}

if ((testName.contains("RX_gain_2412_C0_I[1]")) && (!c0Flag)) {

try {

misc.XMLParserforACS.params key = GlobVars.c0_current_params.get(testName);

} catch (Exception e) {

System.out.println("Missing entry in maps for this testname : " + testName);

return;

}

try {

GlobVars.c0_current_values = new XMLParserforACS().parseXMLandReturnRawValues(testName);

} catch (NullPointerException e) {

System.out.print("variable has null value, exiting.\n");

}

try {

GlobVars.c0_current_params = new XMLParserforACS().parseXMLandReturnLimitmap(testName);

} catch (NullPointerException e) {

System.out.print("variable has null value, exiting.\n");

}

c0Flag = true;

low_limit = Double.parseDouble(GlobVars.c0_current_params.get(testName).getLower());

high_limit = Double.parseDouble(GlobVars.c0_current_params.get(testName).getUpper());

unit = GlobVars.c0_current_params.get(testName).getUnit();

GlobVars.orig_LoLim = low_limit;

GlobVars.orig_HiLim = high_limit;

}

if ((testName.contains("RX_gain_2412_C1_I[1]")) && (!c1Flag)) {

try {

misc.XMLParserforACS.params key = GlobVars.c1_current_params.get(testName);

} catch (Exception e) {

System.out.println("Missing entry in maps for this testname : " + testName);

return;

}

try {

GlobVars.c1_current_values = new XMLParserforACS().parseXMLandReturnRawValues(testName);

} catch (NullPointerException e) {

System.out.print("variable has null value, exiting.\n");

}

try {

GlobVars.c1_current_params = new XMLParserforACS().parseXMLandReturnLimitmap(testName);

} catch (NullPointerException e) {

System.out.print("variable has null value, exiting.\n");

}

c1Flag = true;

low_limit = Double.parseDouble(GlobVars.c1_current_params.get(testName).getLower());

high_limit = Double.parseDouble(GlobVars.c1_current_params.get(testName).getUpper());

unit = GlobVars.c1_current_params.get(testName).getUnit();

}

if (testName.contains("RX_gain_2412_C1_I[1]")) {

index = GlobVars.c1_index;

raw_value = GlobVars.c1_current_values.get(index);

low_limit = Double.parseDouble(GlobVars.c1_current_params.get(testName).getLower());

high_limit = Double.parseDouble(GlobVars.c1_current_params.get(testName).getUpper());

unit = GlobVars.c1_current_params.get(testName).getUnit();

}

if (testName.contains("RX_gain_2412_C0_I[1]")) {

index = GlobVars.c0_index;

raw_value = GlobVars.c0_current_values.get(index);

low_limit = Double.parseDouble(GlobVars.c0_current_params.get(testName).getLower());

high_limit = Double.parseDouble(GlobVars.c0_current_params.get(testName).getUpper());

unit = GlobVars.c0_current_params.get(testName).getUnit();

}

if (testName.contains("RX_gain_2412_C0_I[1]")) {

}

if (validResponse && (testName.contains("RX_gain_2412_C0_I[1]")) && (!GlobVars.limFlag)) {

low_limit = Double.parseDouble(GlobVars.adjlolimStr);

high_limit = Double.parseDouble(GlobVars.adjhilimStr);

}

System.out.println(testName + ": TestingText= " + testtext);

System.out.println(testName + ": low_limit= " + low_limit);

System.out.println(testName + ": high_limit= " + high_limit);

System.out.println("Simulated Test Value Data ");

System.out.println(

"testname = " + testName + " lower = " + low_limit + " upper = " + high_limit

+ " units = " + unit + " raw_value = " + raw_value + " index = " + index);

MultiSiteDouble rawResult = new MultiSiteDouble();

for (int site : context.getActiveSites()) {

rawResult.set(site, raw_value);

}

/** This performs datalog limit evaluation and p/f result and EDL datalogging */

pTD.setHighLimit(high_limit);

pTD.setLowLimit(low_limit);

pTD.evaluate(rawResult);

if (testName.contains("RX_gain_2412_C0_I[1]")) {

if (GlobVars.c0_index < (GlobVars.c0_current_values.size() - 1)) {

GlobVars.c0_index++;

} else {

GlobVars.c0_index = 0;

}

}

if (testName.contains("RX_gain_2412_C1_I[1]")) {

if (GlobVars.c1_index < (GlobVars.c1_current_values.size() - 1)) {

GlobVars.c1_index++;

} else {

GlobVars.c1_index = 0;

}

}

if (pTD.getPassFail().get()) {

System.out.println(

"Sim Value Test " + testName + "\n************ PASSED **************\n");

} else {

System.out.println(testName + "\n************ FAILED *****************\n");

}

if (testName.contains("RX_gain_2412_C0_I[1]")) {

// HTTP performance data: HealthCheck, POST, GET transactions

System.out.println("************ Nexus BiDir Performance Data ******************");

System.out.println("HealthCheck: Host to App Time= "+GlobVars.perf_times.get("Health_h-a_time"));

System.out.println("HealthCheck: App to Host Time= "+GlobVars.perf_times.get("Health_a-h_time"));

System.out.println("HealthCheck: Round-Trip Time= "+GlobVars.perf_times.get("Health_rtd_time"));

System.out.println("TPSend: Host to App Time= "+GlobVars.perf_times.get("Send_h-a_time"));

System.out.println("TPSend: App to Host Time= "+GlobVars.perf_times.get("Send_a-h_time"));

System.out.println("TPSend: Round-Trip Time= "+GlobVars.perf_times.get("Send_rtd_time"));

System.out.println("TPRequest: Host to App Time= "+GlobVars.perf_times.get("Request_h-a_time"));

System.out.println("TPRequest: App to Host Time= "+GlobVars.perf_times.get("Request_a-h_time"));

System.out.println("TPRequest: Round-Trip Time= "+GlobVars.perf_times.get("Request_rtd_time"));

System.out.println("*****************************************************");

}

// Clear large strings used for http post

packetValStr = "";

sku_name = "";

ecid_ptr++;

if (ecid_ptr >= ecid_strs.length) {

ecid_ptr = 0;

}

}

public static String parse(String responseBody) {

if (responseBody.contains("MSFT_ECID")) {

JSONObject results = new JSONObject(responseBody);

String ecid = results.getString("MSFT_ECID");

System.out.println("MSFT_ECID: " + ecid);

String testName = results.getString("testname");

System.out.println("testname: " + testName);

GlobVars.adjlolimStr = results.getString("AdjLoLim");

System.out.println("AdjLoLim: " + GlobVars.adjlolimStr);

GlobVars.adjhilimStr = results.getString("AdjHiLim");

System.out.println("AdjHiLim: " + GlobVars.adjhilimStr);

String sndappTime = results.getString("SendAppTime");

System.out.println("SendAppTime: " + sndappTime);

String sretappTime = results.getString("SendAppRetTime");

System.out.println("SendAppRetTime: " + sretappTime);

String rappTime = results.getString("RqstAppTime");

System.out.println("RqstAppTime: " + rappTime);

String rretappTime = results.getString("RqstAppRetTime");

System.out.println("RqstAppRetTime: " + rretappTime);

long atime = Long.parseLong(sndappTime);

long artime = Long.parseLong(sretappTime);

long ratime = Long.parseLong(rappTime);

long rartime = Long.parseLong(rretappTime);

double deltaSndTime = (atime - GlobVars.ssnanoSeconds)/1000000.0; // Delta time in msec

GlobVars.perf_times.put("Send_h-a_time", deltaSndTime);

System.out.println("Delta TPSend h-a app-host time: "+deltaSndTime+" msec");

double deltaSndRetTime = (GlobVars.senanoSeconds - artime)/1000000.0; // Delta time in msec

GlobVars.perf_times.put("Send_a-h_time", deltaSndRetTime);

System.out.println("Delta TPSend a-h time: "+deltaSndRetTime+" msec");

double sendDeltaTime = (GlobVars.senanoSeconds - GlobVars.ssnanoSeconds)/1000000.0; // Delta time in msec

GlobVars.perf_times.put("Send_rtd_time", sendDeltaTime);

System.out.println("Delta TPSend transaction time: "+sendDeltaTime+" msec");

double deltaRqstTime = (ratime - GlobVars.rsnanoSeconds)/1000000.0; // Delta time in msec

GlobVars.perf_times.put("Request_h-a_time", deltaRqstTime);

System.out.println("Delta TPRequest h-a time: "+deltaRqstTime+" msec");

double deltaRqstRetTime = (GlobVars.renanoSeconds - rartime)/1000000.0; // Delta time in msec

GlobVars.perf_times.put("Request_a-h_time", deltaRqstRetTime);

System.out.println("Delta TPRequest a-h time: "+deltaRqstRetTime+" msec");

double gadeltaTime = (GlobVars.renanoSeconds - GlobVars.rsnanoSeconds)/1000000.0; // Delta time in msec

GlobVars.perf_times.put("Request_rtd_time", gadeltaTime);

}

return responseBody;

}

public static String hparse(String responseBody) {

String hatime = "0";

if (responseBody.contains("health")) {

JSONObject results = new JSONObject(responseBody);

hatime = results.getString("health");

long hatimeval = Long.parseLong(hatime);

System.out.println("health: " + hatime);

double hsdeltaTime = (hatimeval - GlobVars.hsnanoSeconds)/1000000.0; // Delta time in msec

double hrdeltaTime = (GlobVars.henanoSeconds - hatimeval)/1000000.0; // Delta time in msec

double rhdeltaTime = (GlobVars.henanoSeconds - GlobVars.hsnanoSeconds)/1000000.0; // Delta time in msec

GlobVars.perf_times.put("Health_h-a_time", hsdeltaTime);

GlobVars.perf_times.put("Health_a-h_time", hrdeltaTime);

GlobVars.perf_times.put("Health_rtd_time", rhdeltaTime);

System.out.println("Delta health h-a time: "+hsdeltaTime+" msec");

System.out.println("Delta health a-h time: "+hrdeltaTime+" msec");

System.out.println("Delta health rtd time: "+rhdeltaTime+" msec");

}

return hatime;

}

public String buildPacketString() {

int packetSel = GlobVars.packetSelector;

int[] cnt_values = { 203, 1000, 10000, 100000, 1000000,

2000000, 3000000, 4000000, 5000000,

6000000, 7000000, 8000000, 9000000,

10000000, 20000000, 40000000, 60000000, 80000000, 100000000,

120000000, 140000000, 160000000, 180000000, 200000000, 220000000,

240000000, 260000000, 280000000, 300000000};

System.out.println("PacketID: " + packetSel);

String pktStr = "";

int chrcnt = cnt_values[packetSel] - 200; // was 146;

System.out.println("Chrcnt: " + chrcnt);

String valstr = "";

String BUILDCHARS = "ABCDEFGHIJKLMNOPQRSTUVWXYZ1234567890";

StringBuilder packetstr = new StringBuilder();

Random rnd = new Random();

while (packetstr.length() < chrcnt) { // length of the random string.

int index = (int) (rnd.nextFloat() * BUILDCHARS.length());

packetstr.append(BUILDCHARS.charAt(index));

}

pktStr = packetstr.toString();

GlobVars.packetStr = pktStr;

return pktStr;

}

}
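The hparse method above derives three latencies from nanosecond timestamps: host to app, app to host, and round trip. The following sketch reproduces that arithmetic in Python. The function name latency_ms and the sample timestamps are hypothetical. The one-way figures are only meaningful if the Host Controller and Unified Server clocks are synchronized, because they subtract timestamps taken on different machines; the round-trip figure uses the host clock only.

```python
NS_PER_MS = 1_000_000.0


def latency_ms(host_start_ns, app_time_ns, host_end_ns):
    """Return host-to-app, app-to-host and round-trip times in milliseconds."""
    return {
        "h-a": (app_time_ns - host_start_ns) / NS_PER_MS,
        "a-h": (host_end_ns - app_time_ns) / NS_PER_MS,
        "rtd": (host_end_ns - host_start_ns) / NS_PER_MS,
    }


# Hypothetical timestamps: the app stamps the request 2.5 ms after the host
# sends it, and the host receives the response 3.5 ms after that.
times = latency_ms(1_000_000_000, 1_002_500_000, 1_006_000_000)
print(times)
```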

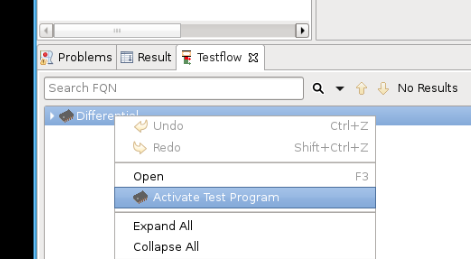

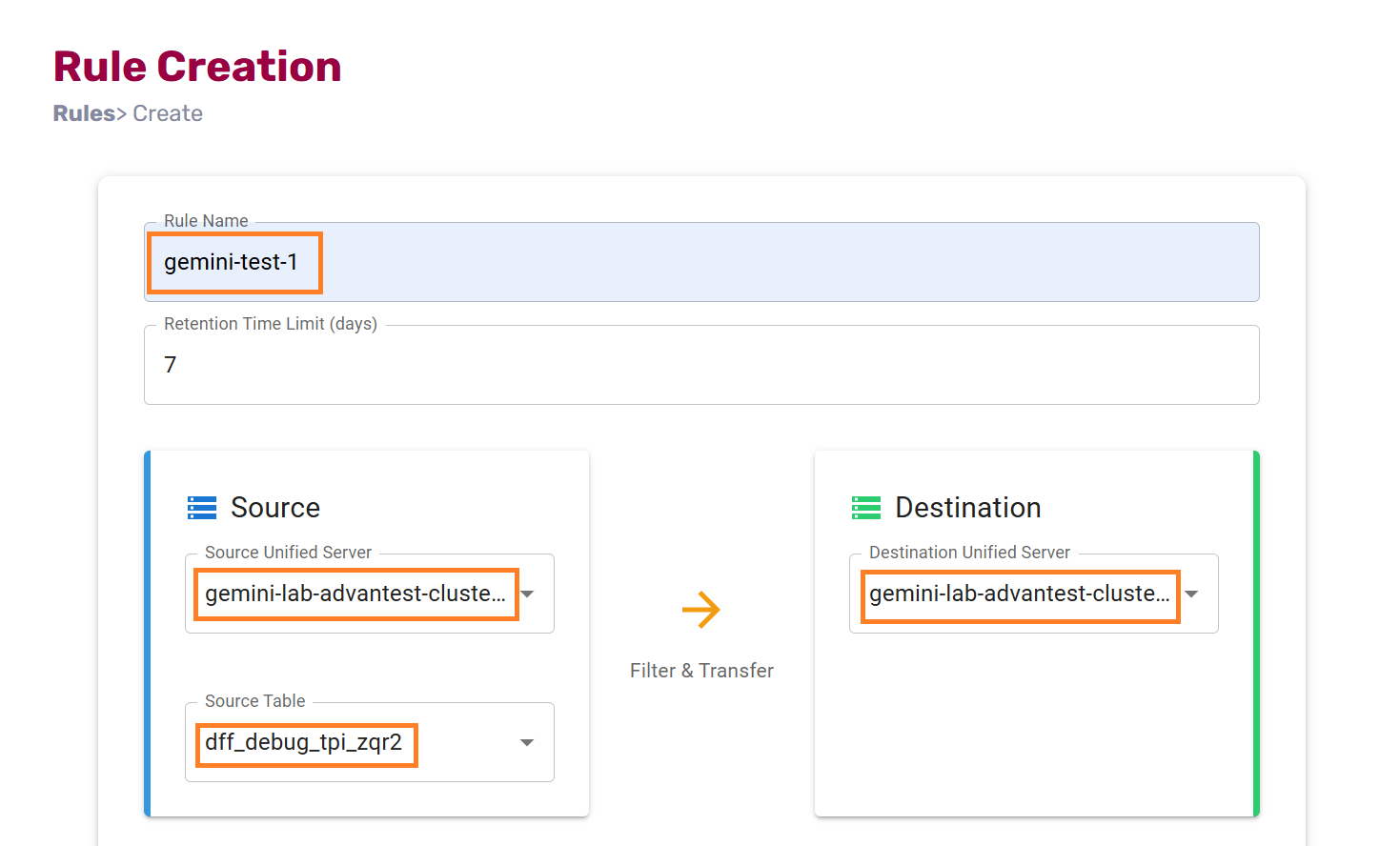

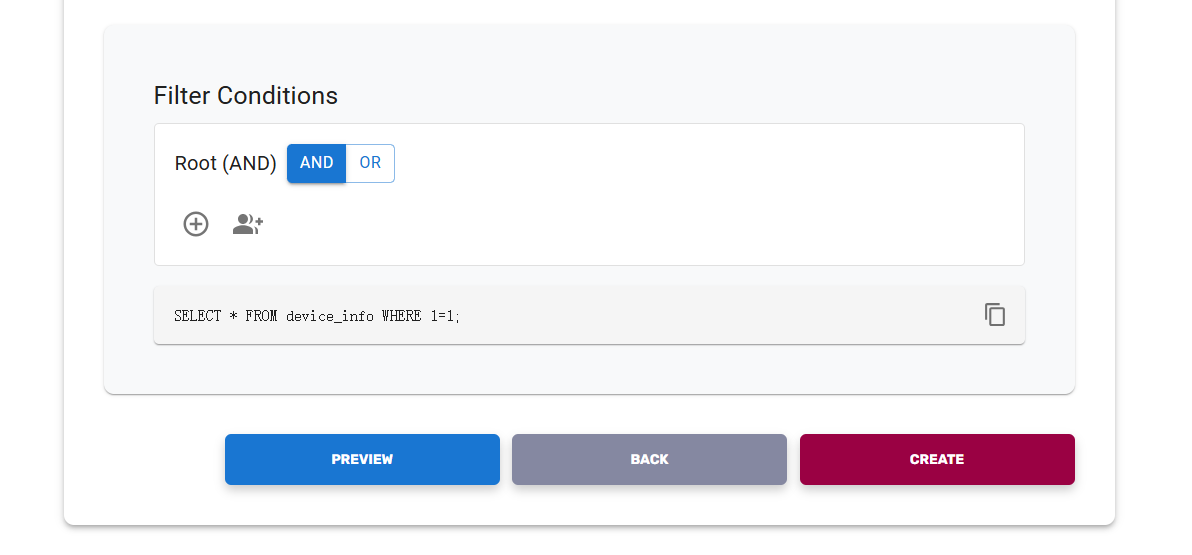

Create data transfer rule on DFF UI#

Log in to https://dff.unified.advantest.com/ with your account.

Click the “+ Create Rule” button on the “rules” page.

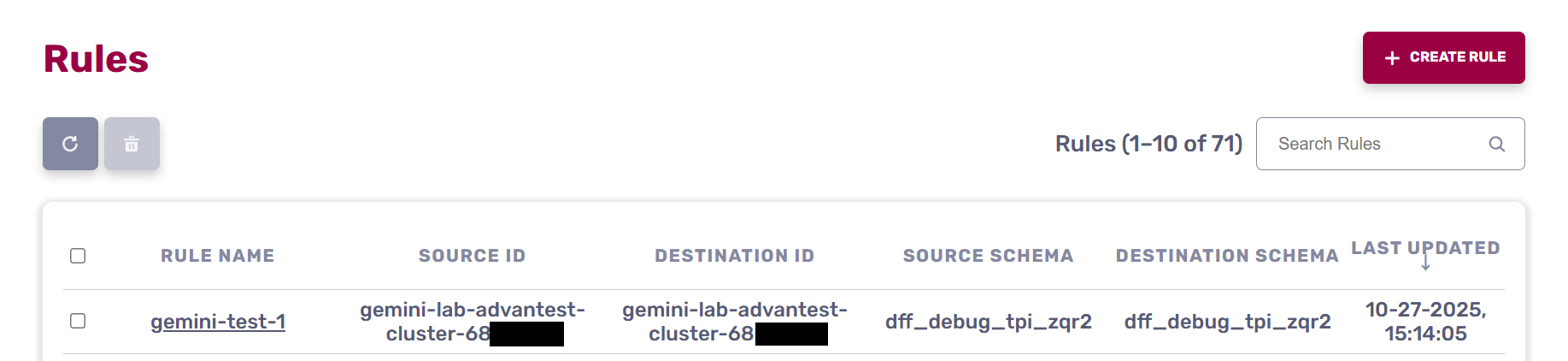

On the “Create Rule” page, enter a rule name, select the 1st AUS name in the “Source Unified Server” list, select the “dff_debug_tpi_zqr2” source table, and select the 2nd AUS name in the “Destination Unified Server” list.

Click the “Create” button.

The new rule then appears in the rule list.

Note: Make sure you have switched to SmarTest8.

Running the SmarTest Test Program again on 1st Host Controller#

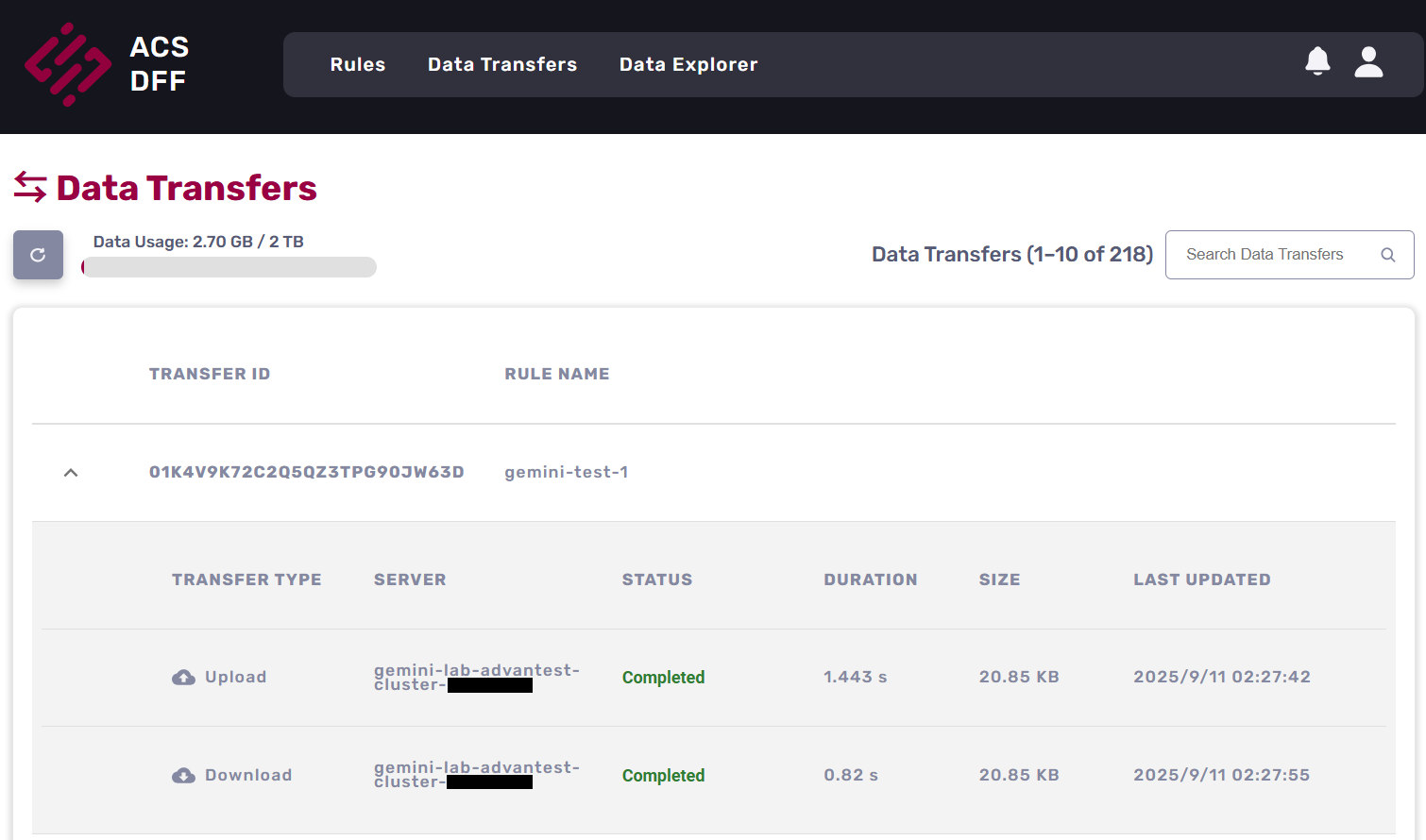

Data Transfer on DFF UI#

Log in to the DFF UI website. On the “Data Transfers” page, you can confirm that the test data has been transferred to the 2nd AUS.

Running the SmarTest Test Program on the 2nd Host Controller to trigger the dff-data-read app and get the 1st AUS test data#

Start SmarTest8

Run the script that starts SmarTest8:

cd ~/apps/application-dpat-v3.1.0
sh start_smt8.sh

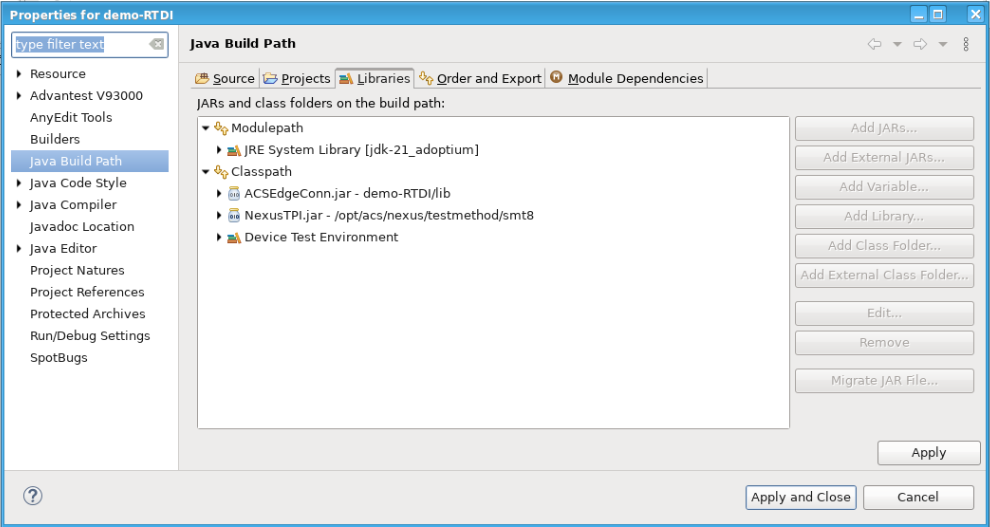

Nexus TPI

You can find “NexusTPI.jar” in the project build path. This dynamic library is used for bi-directional communication between the test program and Nexus.

Modify code to communicate with the dff-data-read app on the Unified Server.

Replace the file ./apps/application-dpat-v3.1.0/client/SMT8/testprogram/demo-RTDI/tml/misc/measuredValueFileReader.java with the following commands:

cd ~
mv ./apps/application-dpat-v3.1.0/client/SMT8/testprogram/demo-RTDI/tml/misc/measuredValueFileReader.java ./apps/application-dpat-v3.1.0/client/SMT8/testprogram/demo-RTDI/tml/misc/measuredValueFileReader.java.old
wget -N http://10.44.5.139/app/measuredValueFileReader_dffRead-3.1.0.tar.gz
tar zvxf measuredValueFileReader_dffRead-3.1.0.tar.gz

In the file, you can find the following code, which gets the DFF data from the 2nd AUS via a TPI request:

String jsonRequest = "{\"request\":\"Get_DFF_Data\"}";
// Send Get_DFF_Data instruction to dff-data-read container app to get customer data DFF data
res = NexusTPI.request(jsonRequest);
System.out.println("Customer Data DFF -> BiDir-request Response= "+res);
String response = NexusTPI.getResponse();
System.out.println("Customer Data DFF -> BiDir-getResponse Response= "+response);
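On the Unified Server side, the request string is parsed and dispatched on its "request" field, as in the consumeTPRequest method of the dff-data-read application. The following is a minimal sketch of that round trip in Python. The names handle_request and fake_dff_lookup are hypothetical; fake_dff_lookup stands in for getCustomerDFFData, whose real return format is not shown in this tutorial.

```python
import json


def fake_dff_lookup():
    """Hypothetical stand-in for the app's DFF query; returns a JSON string."""
    return json.dumps([{"ecid": "DATA_IS_ECID_1_x1_y1", "result": "55.9567985535"}])


def handle_request(request, dff_lookup=fake_dff_lookup):
    """Dispatch on the "request" field, mirroring the Get_DFF_Data branch."""
    action = json.loads(request).get("request")
    if action == "Get_DFF_Data":
        return dff_lookup()
    return json.dumps({"response": "empty or unsupported action"})


# Same request body that the test program sends through NexusTPI.request.
json_request = json.dumps({"request": "Get_DFF_Data"})
response = handle_request(json_request)
print(response)
```

Any other action falls through to the "empty or unsupported action" response, which is a useful string to look for in the console when the request key is misspelled.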

The complete code:


/**

*

*/

package misc;

import java.time.Instant;

import java.util.List;

import java.util.Random;

import org.json.JSONObject;

import base.SOCTestBase;

import nexus.tpi.NexusTPI;

import shifter.GlobVars;

import xoc.dta.annotations.In;

import xoc.dta.datalog.IDatalog;

import xoc.dta.datatypes.MultiSiteDouble;

import xoc.dta.measurement.IMeasurement;

import xoc.dta.resultaccess.IPassFail;

import xoc.dta.testdescriptor.IFunctionalTestDescriptor;

import xoc.dta.testdescriptor.IParametricTestDescriptor;

/**

* ACS Variable control and JSON write demo using SMT8 for Washington

*/

@SuppressWarnings("unused")

public class measuredValueFileReader extends SOCTestBase {

public IMeasurement measurement;

public IParametricTestDescriptor pTD;

public IFunctionalTestDescriptor fTD;

public IFunctionalTestDescriptor testDescriptor;

class params {

private String _upper;

private String _lower;

private String _unit;

public params(String _upper, String lower, String _unit) {

super();

this._upper = _upper;

this._lower = lower;

this._unit = _unit;

}

}

@In

public String testName;

String testtext = "";

String powerResult = "";

int hb_value;

double edge_min_value = 0.0;

double edge_max_value = 0.0;

double prev_edge_min_value = 0.0;

double prev_edge_max_value = 0.0;

boolean c0Flag = false;

boolean c1Flag = false;

boolean dffFlag = true;

String[] ecid_strs = {"U6A629_03_x1_y2", "U6A629_03_x1_y3", "U6A629_03_x1_y4", "U6A629_03_x2_y1", "U6A629_03_x2_y2", "U6A629_03_x2_y6", "U6A629_03_x2_y7", "U6A629_03_x3_y10", "U6A629_03_x3_y2", "U6A629_03_x3_y3", "U6A629_03_x3_y7", "U6A629_03_x3_y8", "U6A629_03_x3_y9", "U6A629_03_x4_y11", "U6A629_03_x4_y1", "U6A629_03_x4_y2", "U6A629_03_x4_y4", "U6A629_03_x5_y0", "U6A629_03_x5_y10", "U6A629_03_x5_y11", "U6A629_03_x5_y3", "U6A629_03_x5_y7", "U6A629_03_x5_y8", "U6A629_03_x6_y3", "U6A629_03_x6_y5", "U6A629_03_x6_y6", "U6A629_03_x6_y7", "U6A629_03_x7_y4", "U6A629_03_x7_y7", "U6A629_03_x8_y2", "U6A629_03_x8_y3", "U6A629_03_x8_y5", "U6A629_03_x8_y7", "U6A629_04_x1_y2", "U6A629_04_x2_y2", "U6A629_04_x2_y3", "U6A629_04_x2_y7", "U6A629_04_x2_y8", "U6A629_04_x2_y9", "U6A629_04_x3_y10", "U6A629_04_x3_y2", "U6A629_04_x3_y3", "U6A629_04_x3_y4", "U6A629_04_x3_y5", "U6A629_04_x3_y7", "U6A629_04_x3_y9", "U6A629_04_x4_y11", "U6A629_04_x4_y2", "U6A629_04_x4_y7", "U6A629_04_x4_y8", "U6A629_04_x4_y9", "U6A629_04_x5_y4", "U6A629_04_x5_y7", "U6A629_04_x6_y5", "U6A629_04_x6_y6", "U6A629_04_x6_y8", "U6A629_04_x7_y10", "U6A629_05_x2_y2", "U6A629_05_x3_y0", "U6A629_05_x4_y1", "U6A629_05_x4_y2", "U6A629_05_x5_y1", "U6A629_05_x5_y2", "U6A629_05_x5_y3", "U6A629_05_x6_y1", "U6A629_05_x6_y3", "U6A629_05_x7_y2", "U6A629_05_x7_y3", "U6A629_05_x8_y3", "U6A633_10_x4_y9", "U6A633_10_x8_y9", "U6A633_11_x2_y2", "U6A633_11_x3_y9", "U6A633_11_x5_y2", "U6A633_11_x6_y10", "U6A633_11_x6_y5", "U6A633_11_x7_y9", "U6A633_11_x8_y8", "U6A633_11_x8_y9", "U6A633_12_x2_y3", "U6A633_12_x2_y5", "U6A633_12_x2_y7", "U6A633_12_x2_y9", "U6A633_12_x3_y1", "U6A633_12_x3_y9", "U6A633_12_x4_y0", "U6A633_12_x5_y1", "U6A633_12_x7_y4"};

int ecid_ptr = 0;

long hnanosec = 0;

List<Double> current_values;

protected IPassFail digResult;

public String pinList;

@Override

public void update() {

/* Overwrite limits provided by the test table */

// pTD.setLowLimit(0.9).setHighLimit(1.1).setTestText("ParametricRXGain").setTestNumber(401);

}

@SuppressWarnings("static-access")

@Override

public void execute() {

int resh;

int resn;

int resx;

IDatalog datalog = context.datalog(); // use in case logDTR does not work

int index = 0;

Double raw_value = 0.0;

String rvalue = "";

String low_limitStr = "";

String high_limitStr = "";

Double low_limit = 0.0;

Double high_limit = 0.0;

String unit = "";

String packetValStr = "";

String sku_name = "";

String command = "";

boolean validResponse = false;

/**

* This portion of the code will read an external datalog file in XML and compare data in

* index 0 to the limit variables received from Nexus

*/

if (testName.contains("RX_gain_2412_C0_I[1]")) {

try {

NexusTPI.location("aus").target("dff-data-read").timeout(100);

Instant hinstant = Instant.now();

GlobVars.hsnanoSeconds = (hinstant.getEpochSecond()*1000000000) + hinstant.getNano();

System.out.println("HealthCheck Start time= "+GlobVars.hsnanoSeconds);

String jsonHRequest = "{\"health\":\"DoHealthCheck\"}";

int hres = NexusTPI.request(jsonHRequest);

System.out.println("BiDir-request Response= "+hres);

String hresponse = NexusTPI.getResponse();

System.out.println("BiDir:: getRequest Health Response:"+hresponse);

Instant instant = Instant.now();

GlobVars.henanoSeconds = (instant.getEpochSecond()*1000000000) + instant.getNano();

System.out.println("HealthCheck Stop time= "+GlobVars.henanoSeconds);

hparse(hresponse);

} catch (Exception e) {

e.printStackTrace();

throw e;

}

}

if ((testName.contains("RX_gain_2412_C0_I[1]")) && (!c0Flag)) {

try {

misc.XMLParserforACS.params key = GlobVars.c0_current_params.get(testName);

} catch (Exception e) {

System.out.println("Missing entry in maps for this testname : " + testName);

return;

}

try {

GlobVars.c0_current_values = new XMLParserforACS().parseXMLandReturnRawValues(testName);

} catch (NullPointerException e) {

System.out.print("variable has null value, exiting.\n");

}

try {

GlobVars.c0_current_params = new XMLParserforACS().parseXMLandReturnLimitmap(testName);

} catch (NullPointerException e) {

System.out.print("variable has null value, exiting.\n");

}

c0Flag = true;

low_limit = Double.parseDouble(GlobVars.c0_current_params.get(testName).getLower());

high_limit = Double.parseDouble(GlobVars.c0_current_params.get(testName).getUpper());

unit = GlobVars.c0_current_params.get(testName).getUnit();

GlobVars.orig_LoLim = low_limit;

GlobVars.orig_HiLim = high_limit;

}

if ((testName.contains("RX_gain_2412_C1_I[1]")) && (!c1Flag)) {

try {

misc.XMLParserforACS.params key = GlobVars.c1_current_params.get(testName);

} catch (Exception e) {

System.out.println("Missing entry in maps for this testname : " + testName);

return;

}

try {

GlobVars.c1_current_values = new XMLParserforACS().parseXMLandReturnRawValues(testName);

} catch (NullPointerException e) {

System.out.print("variable has null value, exiting.\n");

}

try {

GlobVars.c1_current_params = new XMLParserforACS().parseXMLandReturnLimitmap(testName);

} catch (NullPointerException e) {

System.out.print("variable has null value, exiting.\n");

}

c1Flag = true;

low_limit = Double.parseDouble(GlobVars.c1_current_params.get(testName).getLower());

high_limit = Double.parseDouble(GlobVars.c1_current_params.get(testName).getUpper());

unit = GlobVars.c1_current_params.get(testName).getUnit();

}

if (testName.contains("RX_gain_2412_C1_I[1]")) {

index = GlobVars.c1_index;

raw_value = GlobVars.c1_current_values.get(index);

low_limit = Double.parseDouble(GlobVars.c1_current_params.get(testName).getLower());

high_limit = Double.parseDouble(GlobVars.c1_current_params.get(testName).getUpper());

unit = GlobVars.c1_current_params.get(testName).getUnit();

}

if (testName.contains("RX_gain_2412_C0_I[1]")) {

index = GlobVars.c0_index;

raw_value = GlobVars.c0_current_values.get(index);

low_limit = Double.parseDouble(GlobVars.c0_current_params.get(testName).getLower());

high_limit = Double.parseDouble(GlobVars.c0_current_params.get(testName).getUpper());

unit = GlobVars.c0_current_params.get(testName).getUnit();

}

if (testName.contains("RX_gain_2412_C0_I[1]")) {

int res;

try {

System.out.println("ECID["+ecid_ptr+"] = "+ecid_strs[ecid_ptr]);

packetValStr = buildPacketString();

rvalue = String.valueOf(raw_value);

low_limitStr = String.valueOf(low_limit);

high_limitStr = String.valueOf(high_limit);

sku_name = "{\"SKU_NAME\":{\"MSFT_ECID\":\""+ecid_strs[ecid_ptr]+"\",\"testname\":\""+testName+"\",\"RawValue\":\""+rvalue+"\",\"LowLim\":\""+low_limitStr+"\",\"HighLim\":\""+high_limitStr+"\",\"packetString\":\""+packetValStr+"\"}}";

System.out.println("\nJson String sku_name length= "+sku_name.length());

Instant instant = Instant.now();

GlobVars.ssnanoSeconds = (instant.getEpochSecond()*1000000000) + instant.getNano();

System.out.println("TPSend Start Time= "+GlobVars.ssnanoSeconds);

res = NexusTPI.send(sku_name);

Instant pinstant = Instant.now();

GlobVars.senanoSeconds = (pinstant.getEpochSecond()*1000000000) + pinstant.getNano();

System.out.println("TPSend Return Time= "+GlobVars.senanoSeconds);

} catch (Exception e) {

e.printStackTrace();

throw e;

}

try {

long millis = 200;

Thread.sleep(millis);

} catch (InterruptedException e1) {

e1.printStackTrace();

}

try {

Instant ginstant = Instant.now();

GlobVars.rsnanoSeconds = (ginstant.getEpochSecond()*1000000000) + ginstant.getNano();

System.out.println("RqstHostTime TPRqst Get_DFF_Data Start= "+GlobVars.rsnanoSeconds);

String jsonRequest = "{\"request\":\"Get_DFF_Data\"}";

// Send the Get_DFF_Data instruction to the dff-data-read container app to fetch the customer DFF data

res = NexusTPI.request(jsonRequest);

System.out.println("Customer Data DFF -> BiDir-request Response= "+res);

String response = NexusTPI.getResponse();

System.out.println("Customer Data DFF -> BiDir-getResponse Response= "+response);

} catch (Exception e) {

e.printStackTrace();

throw e;

}

}

if (validResponse && (testName.contains("RX_gain_2412_C0_I[1]")) && (!GlobVars.limFlag)) {

low_limit = Double.parseDouble(GlobVars.adjlolimStr);

high_limit = Double.parseDouble(GlobVars.adjhilimStr);

}

System.out.println(testName + ": TestingText= " + testtext);

System.out.println(testName + ": low_limit= " + low_limit);

System.out.println(testName + ": high_limit= " + high_limit);

System.out.println("Simulated Test Value Data ");

System.out.println(

"testname = " + testName + " lower = " + low_limit + " upper = " + high_limit

+ " units = " + unit + " raw_value = " + raw_value + " index = " + index);

MultiSiteDouble rawResult = new MultiSiteDouble();

for (int site : context.getActiveSites()) {

rawResult.set(site, raw_value);

}

/** This performs datalog limit evaluation and p/f result and EDL datalogging */

pTD.setHighLimit(high_limit);

pTD.setLowLimit(low_limit);

pTD.evaluate(rawResult);

if (testName.contains("RX_gain_2412_C0_I[1]")) {

if (GlobVars.c0_index < (GlobVars.c0_current_values.size() - 1)) {

GlobVars.c0_index++;

} else {

GlobVars.c0_index = 0;

}

}

if (testName.contains("RX_gain_2412_C1_I[1]")) {

if (GlobVars.c1_index < (GlobVars.c1_current_values.size() - 1)) {

GlobVars.c1_index++;

} else {

GlobVars.c1_index = 0;

}

}

if (pTD.getPassFail().get()) {

System.out.println(

"Sim Value Test " + testName + "\n************ PASSED **************\n");

} else {

System.out.println(testName + "\n************ FAILED *****************\n");

}

if (testName.contains("RX_gain_2412_C0_I[1]")) {

// HTTP performance data: HealthCheck, POST, GET transactions

System.out.println("************ Nexus BiDir Performance Data ******************");

System.out.println("HealthCheck: Host to App Time= "+GlobVars.perf_times.get("Health_h-a_time"));

System.out.println("HealthCheck: App to Host Time= "+GlobVars.perf_times.get("Health_a-h_time"));

System.out.println("HealthCheck: Round-Trip Time= "+GlobVars.perf_times.get("Health_rtd_time"));

System.out.println("TPSend: Host to App Time= "+GlobVars.perf_times.get("Send_h-a_time"));

System.out.println("TPSend: App to Host Time= "+GlobVars.perf_times.get("Send_a-h_time"));

System.out.println("TPSend: Round-Trip Time= "+GlobVars.perf_times.get("Send_rtd_time"));

System.out.println("TPRequest: Host to App Time= "+GlobVars.perf_times.get("Request_h-a_time"));

System.out.println("TPRequest: App to Host Time= "+GlobVars.perf_times.get("Request_a-h_time"));

System.out.println("TPRequest: Round-Trip Time= "+GlobVars.perf_times.get("Request_rtd_time"));

System.out.println("*****************************************************");

}

// Clear large strings used for http post

packetValStr = "";

sku_name = "";

ecid_ptr++;

if (ecid_ptr >= ecid_strs.length) {

ecid_ptr = 0;

}

}

public static String parse(String responseBody) {

if (responseBody.contains("MSFT_ECID")) {

JSONObject results = new JSONObject(responseBody);

String ecid = results.getString("MSFT_ECID");

System.out.println("MSFT_ECID: " + ecid);

String testName = results.getString("testname");

System.out.println("testname: " + testName);

GlobVars.adjlolimStr = results.getString("AdjLoLim");

System.out.println("AdjLoLim: " + GlobVars.adjlolimStr);

GlobVars.adjhilimStr = results.getString("AdjHiLim");

System.out.println("AdjHiLim: " + GlobVars.adjhilimStr);

String sndappTime = results.getString("SendAppTime");

System.out.println("SendAppTime: " + sndappTime);

String sretappTime = results.getString("SendAppRetTime");

System.out.println("SendAppRetTime: " + sretappTime);

String rappTime = results.getString("RqstAppTime");

System.out.println("RqstAppTime: " + rappTime);

String rretappTime = results.getString("RqstAppRetTime");

System.out.println("RqstAppRetTime: " + rretappTime);

long atime = Long.parseLong(sndappTime);

long artime = Long.parseLong(sretappTime);

long ratime = Long.parseLong(rappTime);

long rartime = Long.parseLong(rretappTime);

double deltaSndTime = (atime - GlobVars.ssnanoSeconds)/1000000.0; // Delta time in msec

GlobVars.perf_times.put("Send_h-a_time", deltaSndTime);

System.out.println("Delta TPSend h-a time: "+deltaSndTime+" msec");

double deltaSndRetTime = (GlobVars.senanoSeconds - artime)/1000000.0; // Delta time in msec

GlobVars.perf_times.put("Send_a-h_time", deltaSndRetTime);

System.out.println("Delta TPSend a-h time: "+deltaSndRetTime+" msec");

double sendDeltaTime = (GlobVars.senanoSeconds - GlobVars.ssnanoSeconds)/1000000.0; // Delta time in msec

GlobVars.perf_times.put("Send_rtd_time", sendDeltaTime);

System.out.println("Delta TPSend transaction time: "+sendDeltaTime+" msec");

double deltaRqstTime = (ratime - GlobVars.rsnanoSeconds)/1000000.0; // Delta time in msec

GlobVars.perf_times.put("Request_h-a_time", deltaRqstTime);

System.out.println("Delta TPRequest h-a time: "+deltaRqstTime+" msec");

double deltaRqstRetTime = (GlobVars.renanoSeconds - rartime)/1000000.0; // Delta time in msec

GlobVars.perf_times.put("Request_a-h_time", deltaRqstRetTime);

System.out.println("Delta TPRequest a-h time: "+deltaRqstRetTime+" msec");

double gadeltaTime = (GlobVars.renanoSeconds - GlobVars.rsnanoSeconds)/1000000.0; // Delta time in msec

GlobVars.perf_times.put("Request_rtd_time", gadeltaTime);

}

return responseBody;

}

public static String hparse(String responseBody) {

String hatime = "0";

if (responseBody.contains("health")) {

JSONObject results = new JSONObject(responseBody);

hatime = results.getString("health");

long hatimeval = Long.parseLong(hatime);

System.out.println("health: " + hatime);

double hsdeltaTime = (hatimeval - GlobVars.hsnanoSeconds)/1000000.0; // Delta time in msec

double hrdeltaTime = (GlobVars.henanoSeconds - hatimeval)/1000000.0; // Delta time in msec

double rhdeltaTime = (GlobVars.henanoSeconds - GlobVars.hsnanoSeconds)/1000000.0; // Delta time in msec

GlobVars.perf_times.put("Health_h-a_time", hsdeltaTime);

GlobVars.perf_times.put("Health_a-h_time", hrdeltaTime);

GlobVars.perf_times.put("Health_rtd_time", rhdeltaTime);

System.out.println("Delta health h-a time: "+hsdeltaTime+" msec");

System.out.println("Delta health a-h time: "+hrdeltaTime+" msec");

System.out.println("Delta health rtd time: "+rhdeltaTime+" msec");

}

return hatime;

}

public String buildPacketString() {

int packetSel = GlobVars.packetSelector;

int[] cnt_values = { 203, 1000, 10000, 100000, 1000000,

2000000, 3000000, 4000000, 5000000,

6000000, 7000000, 8000000, 9000000,

10000000, 20000000, 40000000, 60000000, 80000000, 100000000,

120000000, 140000000, 160000000, 180000000, 200000000, 220000000,

240000000, 260000000, 280000000, 300000000};

System.out.println("PacketID: " + packetSel);

String pktStr = "";

int chrcnt = cnt_values[packetSel] - 200; // was 146;

System.out.println("Chrcnt: " + chrcnt);

String valstr = "";

String BUILDCHARS = "ABCDEFGHIJKLMNOPQRSTUVWXYZ1234567890";

StringBuilder packetstr = new StringBuilder();

Random rnd = new Random();

while (packetstr.length() < chrcnt) { // length of the random string.

int index = rnd.nextInt(BUILDCHARS.length()); // uniform index in [0, BUILDCHARS.length())

packetstr.append(BUILDCHARS.charAt(index));

}

pktStr = packetstr.toString();

GlobVars.packetStr = pktStr;

return pktStr;

}

}
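The listing above timestamps each TPI call in nanoseconds since the Unix epoch and later converts the differences to milliseconds. The following is a minimal, standalone sketch of that arithmetic only. The class name `TpiTiming` and its method names are hypothetical and are not part of the demo program, and `Thread.sleep` merely stands in for the actual TPI call.

```java
import java.time.Instant;

public class TpiTiming {

    /** Converts an Instant to nanoseconds since the Unix epoch (same arithmetic as the listing). */
    public static long epochNanos(Instant t) {
        // The long literal keeps the multiplication in 64-bit arithmetic.
        return t.getEpochSecond() * 1_000_000_000L + t.getNano();
    }

    /** Difference between two epoch-nanosecond stamps, expressed in milliseconds. */
    public static double deltaMillis(long startNanos, long endNanos) {
        return (endNanos - startNanos) / 1_000_000.0;
    }

    public static void main(String[] args) throws InterruptedException {
        long hostStart = epochNanos(Instant.now());
        Thread.sleep(5); // hypothetical stand-in for the TPI send or request call
        long hostEnd = epochNanos(Instant.now());
        System.out.println("Round-trip: " + deltaMillis(hostStart, hostEnd) + " msec");
    }
}
```

Note that the round-trip figure uses only the host clock, whereas the host-to-app and app-to-host deltas subtract timestamps taken on two different machines, so those two values are only meaningful when both clocks are synchronized.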

Visualize Results#

In the EWC console, you can find the following logs, which include the DFF data that was transferred from AUS1:

Customer Data DFF -> BiDir-request Response= 0

Customer Data DFF -> BiDir-getResponse Response= {"x_coord":"-32768","optional_flag":"","head_number":"1","units":"V","high_spec":"0.0","lowlimit_scaling":"0","result":"55.9567985535","test_text":"Parametric","low_spec":"0.0","lot_id":"","low_limit_format":"","part_text":"","save_at":"1758001024067","low_limit":"40.0","high_limit":"70.0","part_id":"2","test_flag":"00","result_scaling":"0","param_flag":"00","test_suite":"Main.RXGainTestSuite","wafer_id":"None","result_format":"","highlimit_scaling":"0","measurement_name":"","y_coord":"-32768","alarm_id":"","site_number":"2","high_limit_format":"","test_number":"50105902"}
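If the final test program needs individual fields from this response (for example, to compare the wafer sort result against its limits), the flat JSON object can be unpacked into key/value pairs. The sketch below is an illustration under stated assumptions, not part of the demo program: the class `DffResponseFields` and its methods are hypothetical, the regular expression only handles a flat object whose values are all quoted strings without escaped quotes (as in the log line above), and the sample string is a shortened subset of that log line. A production program would normally use a real JSON parser instead.

```java
import java.util.LinkedHashMap;
import java.util.Map;
import java.util.regex.Matcher;
import java.util.regex.Pattern;

public class DffResponseFields {

    // Matches "key":"value" pairs; assumes no escaped quotes inside keys or values.
    private static final Pattern PAIR =
            Pattern.compile("\"([^\"]+)\"\\s*:\\s*\"([^\"]*)\"");

    /** Extracts every string-valued pair of a flat JSON object into an ordered map. */
    public static Map<String, String> toMap(String flatJson) {
        Map<String, String> fields = new LinkedHashMap<>();
        Matcher m = PAIR.matcher(flatJson);
        while (m.find()) {
            fields.put(m.group(1), m.group(2));
        }
        return fields;
    }

    /** Returns true when low_limit <= result <= high_limit. */
    public static boolean withinLimits(Map<String, String> fields) {
        double result = Double.parseDouble(fields.get("result"));
        double low = Double.parseDouble(fields.get("low_limit"));
        double high = Double.parseDouble(fields.get("high_limit"));
        return result >= low && result <= high;
    }

    public static void main(String[] args) {
        // Shortened subset of the response shown in the EWC console log.
        String response = "{\"units\":\"V\",\"result\":\"55.9567985535\","
                + "\"low_limit\":\"40.0\",\"high_limit\":\"70.0\","
                + "\"lot_id\":\"\",\"test_suite\":\"Main.RXGainTestSuite\"}";
        Map<String, String> fields = toMap(response);
        System.out.println(fields.get("test_suite") + " result=" + fields.get("result")
                + " " + fields.get("units"));
        System.out.println("within limits: " + withinLimits(fields));
    }
}
```

Because every value in the response is transmitted as a string, numeric fields such as `result`, `low_limit`, and `high_limit` have to be converted explicitly before they are compared.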
